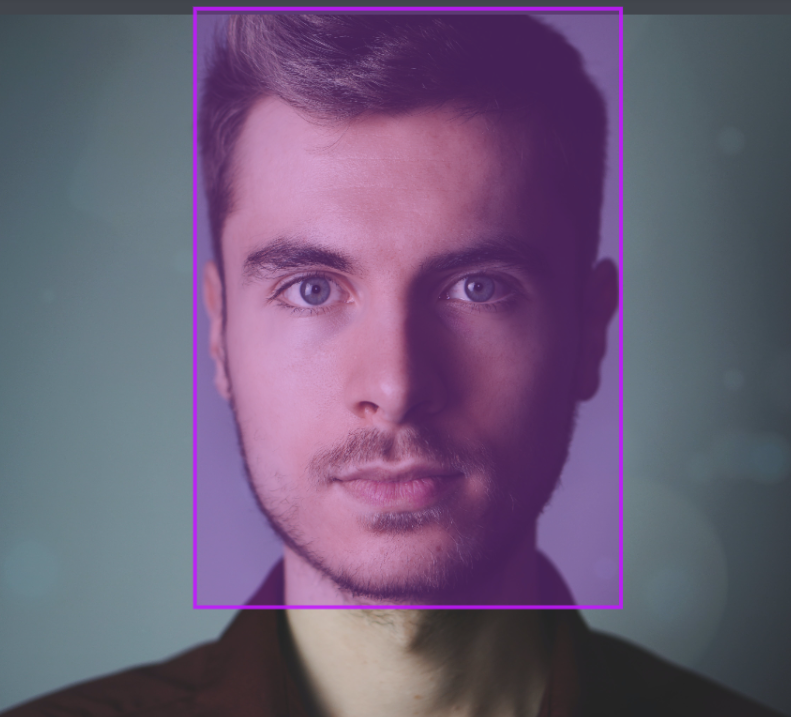

Tight Bounding Boxes in Facial Recognition Technology

Accurate and precise object detection is crucial in facial recognition technology. One of the key components in achieving this accuracy is the use of tight bounding boxes. These bounding boxes play a vital role in identifying and localizing facial features, enabling the system to accurately classify and recognize individuals.

A close-up of a person's face being scanned by a small device with a red laser. The device is surrounded by intricate circuit lines and symbols, giving it a futuristic feel. The person's face is partially obscured by a tight bounding box, emphasizing the precision and accuracy of the facial recognition technology being used. The background is dark, suggesting that this is happening in a high-security environment.

Implementing tight bounding boxes can be challenging, but it is essential to ensure that the boundaries of the detected objects are precisely defined. By maintaining a high Intersection over Union (IoU) score, reducing overlap, and annotating occluded objects, the system can achieve pixel-perfect tightness in the bounding boxes.

Key Takeaways:

- Tight bounding boxes are crucial for accurate object detection in facial recognition technology.

- Challenges include achieving pixel-perfect tightness, reducing overlap, and annotating occluded objects.

- Best practices include maintaining a high Intersection over Union (IoU), labeling and tagging all objects, and varying box sizes based on object size.

- Bounding boxes play a crucial role in object detection, image processing, and various computer vision and machine learning applications.

- Accurate and precise bounding box annotation is essential for object detection and computer vision tasks.

What is Bounding Box Annotation?

Bounding box annotation is a fundamental technique used in computer vision and machine learning applications for object detection and image recognition algorithms. It involves manually labeling or annotating an image with a rectangular box that encloses a specific object or feature of interest. This annotated bounding box defines the coordinates of the object and is accompanied by a class label, providing valuable information for training image recognition algorithms and conducting various computer vision tasks.

Bounding box annotation serves as a crucial tool for object detection, enabling accurate localization and identification of objects within an image. By outlining the boundaries of objects, it aids in training machine learning models to recognize and classify different objects, contributing to the development of intelligent systems in various domains. This technique finds applications in areas such as autonomous driving, surveillance systems, medical imaging, and more.

The process of bounding box annotation requires human input and expertise to ensure accurate and precise annotations. It plays a vital role in enhancing the performance and accuracy of computer vision tasks, allowing machines to understand and interpret visual data more effectively.

| Type | Description |

|---|---|

| Axis-aligned bounding boxes (AABBs) | Rectangular boxes aligned with the axes (x and y) of the image, widely used for object detection. |

| Minimum bounding boxes (MBBs) | Bounding boxes that tightly fit the object by aligning the box with its orientation, providing a more accurate representation of the object's boundaries. |

| Rotated bounding boxes | Bounding boxes that accommodate objects with irregular shapes or orientations, providing a more precise fit. |

| Oriented bounding boxes | Bounding boxes that align with the object's orientation, enabling accurate detection and recognition for objects with varying angles. |

Image Annotation Techniques

Image annotation techniques play a crucial role in computer vision tasks. In addition to bounding box annotation, there are several other techniques commonly used to label and annotate images. These techniques include polygon annotation, segmentation annotation, and landmark annotation.

Polygon annotation involves manually drawing polygons around objects of interest instead of using rectangular bounding boxes. This technique is useful for objects with irregular shapes or for providing more precise annotations.

Segmentation annotation, on the other hand, involves labeling individual pixels or groups of pixels to differentiate between different objects or regions within an image. This technique is often used when there is a need to identify and classify multiple objects within a single image.

Landmark annotation is another important technique used in image annotation. It involves identifying and labeling specific points or landmarks on an object. This technique is commonly used in tasks such as facial recognition, where key facial features need to be accurately identified and annotated.

Each of these techniques has its own advantages and use cases, and the choice of technique depends on the specific requirements of the image annotation task. Whether it's bounding box annotation, polygon annotation, segmentation annotation, or landmark annotation, these techniques are essential for training machine learning models and improving object detection and classification in computer vision applications.

Create an image of a group of people working on facial recognition technology, with a focus on the process of tight bounding box annotation. Show different techniques and tools being used to accurately annotate facial features and ensure tight bounding boxes around faces. Use colors that represent precision and attention to detail, and include examples of before-and-after annotations to demonstrate the impact of accurate bounding boxes on the quality of the facial recognition system.

| Image Annotation Technique | Use Case |

|---|---|

| Polygon Annotation | Objects with irregular shapes |

| Segmentation Annotation | Multiple objects or regions within an image |

| Landmark Annotation | Facial recognition, key feature identification |

Why Are Bounding Boxes Important?

Bounding boxes are a fundamental element in object detection, image annotation, data visualization, and object recognition. These bounding boxes are rectangular enclosures that help identify and localize objects within an image or video, enabling various computer vision tasks. By outlining the boundaries of objects, bounding boxes facilitate tasks such as image classification, object tracking, and face detection.

One of the key reasons why bounding boxes are important is that they provide valuable information for building image databases and training machine learning algorithms. When bounding boxes are accurately labeled and annotated, they contribute to the development of robust algorithms that can efficiently recognize and classify objects. This is essential in applications such as autonomous driving, surveillance systems, and medical imaging, where accurate identification and localization of objects are critical.

Bounding boxes also play a crucial role in data visualization, as they provide a visual representation of object positions and sizes within an image. This aids in understanding and interpreting visual data, allowing researchers and practitioners to analyze and extract meaningful insights from images. Moreover, bounding boxes enable the comparison and analysis of object positions and sizes across different images, further enhancing data exploration and analysis.

Table: Applications of Bounding Boxes

| Application | Description |

|---|---|

| Object Detection | Allows the identification and localization of objects within images or videos. |

| Image Annotation | Enables the labeling and tagging of objects for machine learning algorithms. |

| Data Visualization | Provides a visual representation of object positions and sizes. |

| Object Recognition | Aids in the development of algorithms for recognizing and classifying objects. |

In summary, bounding boxes are important because they enable object detection, image annotation, data visualization, and object recognition. They provide valuable information for training machine learning algorithms and help visualize and interpret visual data. Bounding boxes are crucial in various applications and play a vital role in improving the accuracy and efficiency of computer vision systems.

Types of Bounding Box Annotation

When it comes to bounding box annotation, there are various types that can be used depending on the specific application and characteristics of the objects being enclosed. These types include:

1. Axis-Aligned Bounding Boxes (AABBs)

Axis-aligned bounding boxes align with the axes (x and y) of the image and are widely used in object detection tasks. They are rectangular in shape and do not account for the orientation of the object. AABBs provide a simple and efficient way to enclose objects within an image.

2. Minimum Bounding Boxes (MBBs)

Minimum bounding boxes tightly fit the object by aligning the box with its orientation, providing a more accurate representation of the object's boundaries. This type of annotation is particularly useful for objects with irregular shapes or orientations.

3. Rotated Bounding Boxes

Rotated bounding boxes accommodate objects with irregular shapes or orientations, providing a more precise fit. They capture the object's rotation and accurately enclose the object within the image.

4. Oriented Bounding Boxes

Oriented bounding boxes also account for the object's rotation and orientation. They provide a more accurate representation of the object's boundaries, especially for objects with complex shapes.

5. Minimum Volume Bounding Boxes

Minimum volume bounding boxes aim to enclose the object with the minimum possible volume. They provide a tight fit and can be useful for objects with irregular shapes and varying sizes.

6. Convex Hull Bounding Boxes

Convex hull bounding boxes are created by connecting the outermost points of a set of objects. They are useful for objects with complex shapes and provide a bounding box that closely follows the object's contour.

These different types of bounding box annotation offer flexibility in accurately enclosing objects within images, catering to various applications and object characteristics.

"Three different types of bounding boxes used in facial recognition technology, each with distinct shapes and colors."

| Type | Advantages | Disadvantages |

|---|---|---|

| Axis-Aligned Bounding Boxes (AABBs) | - Simple and efficient - Widely used in object detection tasks | - Do not account for object orientation |

| Minimum Bounding Boxes (MBBs) | - Tightly fit objects based on orientation - Accurate representation of object boundaries | - More complex annotation process compared to AABBs |

| Rotated Bounding Boxes | - Accommodate objects with irregular shapes or orientations - Provide precise fit | - More complex annotation process compared to AABBs and MBBs |

| Oriented Bounding Boxes | - Account for object rotation and orientation - Accurate representation of object boundaries | - More complex annotation process compared to AABBs and MBBs |

| Minimum Volume Bounding Boxes | - Enclose object with minimum volume - Tight fit for irregular shapes and sizes | - More complex annotation process compared to AABBs and MBBs |

| Convex Hull Bounding Boxes | - Follow object contour closely - Useful for objects with complex shapes | - May enclose unnecessary space for certain objects |

Best Practices for Bounding Box Annotation

Accurate and precise bounding box annotation is crucial for object detection and computer vision tasks. Implementing best practices can significantly enhance the effectiveness and accuracy of bounding box annotation. The following practices are recommended:

Tightness and Pixel-Perfect Annotation

Ensuring tightness in bounding boxes is essential for accurately capturing object boundaries. This involves aligning the bounding boxes as closely as possible to the actual object edges. Achieving pixel-perfect tightness is particularly important for complex objects with intricate shapes or fine details. By meticulously annotating bounding boxes, researchers and practitioners can improve the accuracy of object recognition algorithms.

Avoiding Overlap

Overlap between bounding boxes can lead to confusion and misclassification. It is crucial to avoid overlapping bounding boxes by carefully adjusting their positions and sizes. This practice enables clear delineation of individual objects within an image and improves the reliability of object detection algorithms.

Labeling and Tagging

Properly labeling and tagging bounding boxes is essential for data organization and analysis. Each bounding box should be labeled and tagged with the appropriate class name or label. This practice allows for efficient data management and enables accurate classification and recognition of objects within an image.

Varying Box Sizes

Varying the sizes of bounding boxes based on the size of the object being annotated can improve annotation accuracy. Larger objects may require larger bounding boxes to accurately capture their boundaries, while smaller objects may require smaller bounding boxes. Adapting the size of the bounding boxes to match the size of the objects being annotated can enhance object recognition and classification algorithms.

| Best Practice | Description |

|---|---|

| Tightness and Pixel-Perfect Annotation | Aligning bounding boxes closely to object edges for accurate object boundary representation. |

| Avoiding Overlap | Preventing overlap between bounding boxes to ensure clear delineation of objects. |

| Labeling and Tagging | Appropriately labeling and tagging bounding boxes for efficient data management and accurate classification. |

| Varying Box Sizes | Adjusting the size of bounding boxes based on the size of the objects being annotated for improved accuracy. |

By following these best practices, researchers and practitioners can optimize their bounding box annotation processes, leading to more reliable and accurate object detection and recognition in computer vision and AI applications.

Image Annotation in Computer Vision and AI

Image annotation is a crucial aspect of computer vision and AI, enabling machines to recognize and classify objects within digital images. It involves labeling objects within an image using various techniques such as image labeling, object annotation, and image tagging. These techniques help improve image recognition, object detection, and visual search, making them invaluable in the field of computer vision.

With the advancements in machine learning, image annotation has become even more important. By providing annotated data, machines can learn to identify and interpret objects accurately, enabling them to perform complex tasks such as autonomous driving, surveillance, and medical diagnosis. Image annotation also plays a vital role in training deep learning models, allowing them to analyze vast amounts of visual data and make accurate predictions.

There are different techniques and methods used in image annotation, including bounding box annotation, polygon annotation, and segmentation annotation. Each technique has its strengths and is suitable for different scenarios. Bounding box annotation, in particular, is widely used due to its simplicity and effectiveness. It provides a straightforward way to define the position and boundaries of an object within an image, making it an essential tool in object detection and classification tasks.

| Technique | Advantages | Applications |

|---|---|---|

| Bounding Box Annotation | Simple, precise, widely supported | Object detection, image classification, face recognition |

| Polygon Annotation | Accurate representation of object shape | Objects with irregular shapes or contours |

| Segmentation Annotation | Pixel-level labeling and differentiation | Image segmentation, object tracking |

| Landmark Annotation | Identification of specific points or landmarks | Facial recognition, object alignment |

Image annotation is extensively applied in various industries, including healthcare, manufacturing, e-commerce, and robotics. In healthcare, it aids in medical imaging analysis, disease diagnosis, and treatment planning. In manufacturing, it improves quality control, defect detection, and product inspection. In e-commerce, it enhances visual search, product recommendation, and virtual try-on experiences. And in robotics, it enables machines to perceive their surroundings and interact with the environment effectively.

In conclusion, image annotation is a fundamental technique in computer vision and AI. It empowers machines to understand and interpret visual data, paving the way for advancements in various fields. By accurately labeling objects within images, machines can learn to recognize and classify objects, enabling them to perform complex tasks and make informed decisions. With the continued development of AI and machine learning, image annotation will continue to play a pivotal role in shaping the future of computer vision.

Bounding Boxes in Image Processing

When it comes to image processing, bounding boxes play a crucial role in defining the borders of objects within an image. They provide valuable information for object detection and help create collision boxes for objects. Data annotators utilize the concept of bounding boxes to draw imaginary rectangles over objects in images, defining their position and location with X and Y coordinates. This simplifies the task of machine learning algorithms in finding collision paths and optimizing computing resources.

"Bounding boxes are an efficient and robust technique in image annotation that reduces costs and enhances object detection accuracy."

By precisely enclosing the objects of interest, bounding boxes assist in accurately localizing and identifying specific elements within an image. For example, in autonomous driving applications, bounding boxes help detect and track vehicles, pedestrians, and other obstacles on the road. Similarly, in medical imaging, bounding boxes aid in identifying and analyzing specific areas of interest, such as tumors or abnormalities.

To illustrate the importance of bounding boxes in image processing, let's consider an example of a collision avoidance system. The system relies on bounding boxes to detect potential collisions by analyzing the position and trajectory of objects in real-time. By comparing bounding boxes and their coordinates, the system can determine if any objects are on a collision path and take appropriate action to avoid accidents.

In conclusion, bounding boxes are integral to image processing and object detection. They enable accurate identification and localization of objects, optimize computing resources, and enhance the performance of machine learning algorithms. Utilizing bounding boxes in data annotation ensures precise and reliable results, benefiting various industries such as autonomous driving, surveillance systems, and medical imaging.

Comparing Tight and Loose Bounding Boxes

When it comes to image annotation, the choice between tight and loose bounding boxes can have a significant impact on the accuracy and flexibility of object recognition. Tight bounding boxes offer a higher level of precision in delineating objects, ensuring that the boundaries are accurately captured. This level of accuracy improves the overall object recognition performance, especially in applications where precise localization is essential.

On the other hand, loose bounding boxes provide more flexibility in accommodating varying object sizes and complex backgrounds. They allow for a less restrictive approach, which can be beneficial in scenarios where objects have irregular shapes or are located within cluttered environments. Loose bounding boxes offer a greater degree of tolerance, enabling improved object recognition in diverse images.

“The choice between tight and loose bounding boxes ultimately depends on the specific requirements of the annotation task and the characteristics of the images being annotated.”

By carefully considering the specific needs of the application, researchers and practitioners can determine whether tight or loose bounding boxes are more suitable for achieving the desired accuracy and flexibility. It is important to strike a balance between precision and adaptability to maximize the effectiveness of object recognition algorithms.

| Feature | Tight Bounding Boxes | Loose Bounding Boxes |

|---|---|---|

| Accuracy | Highly accurate in capturing object boundaries | Provides flexibility, which may result in slightly lower accuracy |

| Object Size | Best suited for objects with regular shapes and sizes | Accommodates varying object sizes and irregular shapes |

| Complex Backgrounds | May struggle with objects against cluttered or complex backgrounds | Tolerates complex backgrounds and cluttered environments |

| Application | Precise object recognition and localization | Object recognition in diverse and challenging environments |

Ultimately, the choice between tight and loose bounding boxes should be based on the specific requirements of the annotation task and the characteristics of the images being annotated. Each approach has its advantages, and understanding their differences is crucial in achieving optimal object recognition accuracy and flexibility.

Conclusion

In conclusion, bounding boxes play a crucial role in image annotation and computer vision. They are essential for accurate object detection, localization, and interpretation within images, making them a fundamental component of various applications. Whether choosing tight or loose bounding boxes, researchers and practitioners must consider the specific requirements of the annotation task to enhance image recognition and data annotation. By following best practices, such as achieving tightness, maintaining a high Intersection over Union (IoU) score, and labeling and tagging each box, the effectiveness and efficiency of computer vision and machine learning systems can be greatly improved.

Furthermore, bounding boxes are not only important for image annotation but also for applications such as facial recognition technology and object recognition. They enable precise identification and classification of objects, contributing to the development of advanced technologies in various industries. With the right choice of bounding box technique and a thorough understanding of its applications, researchers and practitioners can leverage the power of image annotation to drive innovation and improve computer vision systems.

In summary, image annotation and bounding boxes are inseparable. They go hand in hand to enable machines to recognize and understand visual data. By implementing accurate and precise bounding box annotation techniques, we can unlock the full potential of computer vision, AI, and machine learning, leading to advancements in fields such as medicine, manufacturing, e-commerce, and robotics. Bounding boxes are not just rectangular shapes; they are the foundation upon which our digital world builds its understanding of the physical world.

FAQ

What are tight bounding boxes?

Tight bounding boxes are rectangular boxes that accurately capture the boundaries of objects in an image or video for precise object detection and classification.

Why are tight bounding boxes important in facial recognition technology?

Accurate and tight bounding boxes are crucial for precise object detection and classification in facial recognition technology as they help identify and locate faces with high accuracy.

What challenges are faced in implementing tight bounding boxes?

Challenges in implementing tight bounding boxes include achieving pixel-perfect tightness, reducing overlap between boxes, and accurately annotating occluded objects.

What are best practices for bounding box annotation?

Best practices for bounding box annotation include maintaining a high Intersection over Union (IoU) score, labeling and tagging all objects, and varying box sizes based on object size.

What are the different types of bounding box annotation?

The different types of bounding box annotation include axis-aligned bounding boxes, minimum bounding boxes, rotated bounding boxes, oriented bounding boxes, minimum volume bounding boxes, and convex hull bounding boxes.

How are bounding boxes used in computer vision and AI?

Bounding boxes play a vital role in object detection, image annotation, data visualization, and object recognition in computer vision and AI applications.

What role do bounding boxes play in image processing?

Bounding boxes define the borders of objects within an image and assist in object detection, collision box creation, and optimizing computing resources in image processing.

What is the difference between tight and loose bounding boxes?

Tight bounding boxes provide high precision in object delineation, while loose bounding boxes offer more flexibility in accommodating varying object sizes and complex backgrounds.

How do bounding boxes enhance image annotation accuracy?

Bounding boxes enable precise object detection, localization, and interpretation within images, enhancing accuracy in image recognition and data annotation.

Comments ()