Is it possible to build a more ethical technological world with artificial intelligence?

A robot may not injure a human being or, through inaction, allow a human being to come to harm. A robot must obey orders given by humans, except where such orders conflict with the First Law. A robot must protect its own existence, as long as such protection does not conflict with the First or Second Law.

Eighty years ago, long before artificial intelligence was a reality, Isaac Asimov outlined the Three Laws of Robotics. They remain a perfect example of how humans have dealt with the ethical challenges posed by technology: by protecting its users.

The ethical challenges that humanity faces, however, whether or not they relate to technology, are more of a social issue than a technological one. In this regard, technology, and artificial intelligence in particular, could help us move toward a more ethically desirable world. By rethinking how we design technology and artificial intelligence, we can use them to build a more ethical society.

What is the objective nature of artificial intelligence?

In Asimov's day, the world was a very low-tech place compared with today. In 1942, Alan Turing had only recently formalized the algorithmic concepts that would later play a vital role in the development of modern computing. Computers and the internet did not yet exist, let alone artificial intelligence or autonomous robots.

Even so, Asimov already anticipated the fear that humans would succeed in creating machines so intelligent that they would rebel against their creators.

In the 1960s, in the early days of computing and data technologies, these issues were not among the top concerns of science. Because data were regarded as objective and scientific, it was believed that the resulting information would be accurate and of high quality: like a mathematical calculation, it was derived from an algorithm.

"As a consequence of artificial intelligence's objective nature, human bias was eliminated," said Joan Casas-Roma.

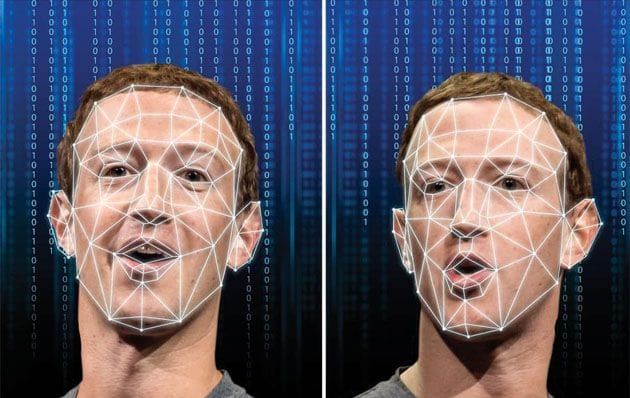

The reality, however, was quite different. It became evident that both the data and the algorithms were replicating the model or worldview of the person using the data or designing the system.

In other words, the technology did not eliminate human biases but transferred them to a new medium. Over time we have learned that artificial intelligence is not necessarily objective, so its decisions can be highly biased. Such decisions tend to perpetuate inequalities rather than alleviate them.

We have thus reached the same point anticipated by the Laws of Robotics. The topic of ethics and artificial intelligence came to the fore from a reactive and protective perspective: after realizing that artificial intelligence was neither fair nor objective, we took steps to minimize its negative effects. According to Casas-Roma, a shield had to be constructed so that the adverse effects of technology on users would not be perpetuated.

Because we responded in this manner, he explains in the manifesto, over the past few decades we have failed to address a fundamental question in the relationship between technology and ethics: how can artificial intelligences with access to unprecedented amounts of data achieve ethically desirable outcomes?

In other words, how can technology help us build a future that is ethically desirable?

An idealistic relationship between ethics and technology

The European Union's medium-term goals include moving toward a more inclusive, integrated, and cooperative society in which citizens have a better understanding of global challenges.

Technology and artificial intelligence may be a major obstacle to achieving this goal, but they may also be a major ally. Depending on how artificial intelligence is designed to interact with people, it may promote a more cooperative society, according to Casas-Roma.

Online education has grown rapidly in recent years.

Although learning online can be isolating, technology may also lead to greater cooperation and community building. For example, rather than only correcting exercises automatically, a system could also send a message to another student who has already solved the problem, making it easier for students to help each other.

"The idea is to understand how technology can be used to facilitate the interaction of people in a manner that promotes community and cooperation," he noted.

According to Casas-Roma, an ethical idealist perspective can rethink how technology creates new opportunities that benefit users and the community as a whole ethically. This approach to the ethics of technology should have the following features:

- Expansive in scope. It is crucial that technology and its use are designed in a way that allows its users to flourish and become more capable.

- Idealistic. We must always keep in mind how technology could improve things as a whole.

- Enabling. It is important to understand and shape the possibilities created by technology so that it enhances and supports the ethical growth of users and society.

- Adaptable. The social, political, economic and technological landscape can be reshaped in order to make progress toward a new ideal state of affairs.

- Principle-driven. By using technology in this manner, we can enable and promote behaviors, interactions, and practices that are aligned with certain ethical values.

"This is not so much about data or algorithms as it is about rethinking how we interact, how we wish to interact, and what we enable through a technology that imposes itself as a medium," concluded Joan Casas-Roma.

Although this idea points to the power of technology, it is less about that power than about how technology is designed. A paradigm shift, a change of mindset, is required. The ethical consequences of technology are not a technological problem but a social one: they raise the question of how we interact with one another and with our surroundings through technology.

Src: Universitat Oberta de Catalunya
