Co-Writing with AI: How Assistance Can Impact Your Perspectives

New research suggests that artificial intelligence-powered writing assistants, which provide sentence autocompletion and "smart replies," not only influence the words people write but also shape their thoughts and ideas. Maurice Jakesch, a doctoral student in information science, conducted a study involving over 1,500 participants. They were asked to write a paragraph answering the question, "Is social media good for society?" The study found that individuals who used a biased AI writing assistant, either in favor of or against social media, were twice as likely to write a paragraph aligning with the assistant's bias. These participants also expressed a greater likelihood of holding the same opinion as the AI assistant compared to those who wrote without AI assistance.

The research highlights the potential repercussions of biases embedded in AI writing tools, whether intentional or unintentional, on culture and politics. The authors stress the need to better understand these implications before the AI models are widely deployed across various domains. Co-author Mor Naaman, a professor at the Jacobs Technion-Cornell Institute, emphasizes that beyond increasing efficiency and creativity, AI writing tools could lead to language and opinion shifts with potential consequences for individuals and society.

While previous studies have examined how large language models like ChatGPT can generate persuasive ads and political messages, this research is the first to demonstrate that the process of writing with an AI-powered tool can influence a person's opinions. The study, titled "Co-Writing with Opinionated Language Models Affects Users' Views," was presented by Jakesch at the 2023 CHI Conference on Human Factors in Computing Systems.

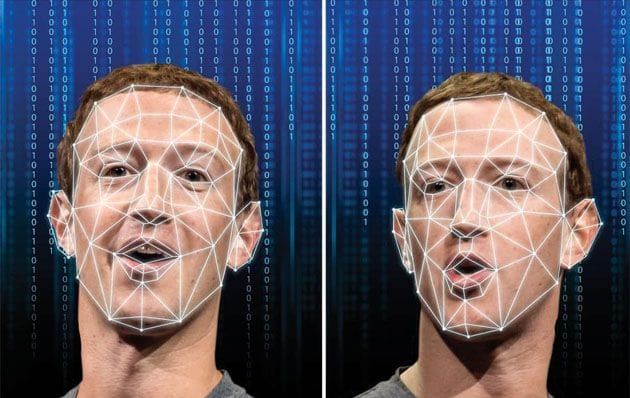

To investigate how people interact with AI writing assistants, Jakesch manipulated a large language model to express either positive or negative opinions about social media. Participants composed their paragraphs, either alone or with the assistance of the opinionated AI, on a platform resembling a social media website. The platform collected data on participants' interactions, such as their acceptance of AI suggestions and the time taken to compose the paragraph.

Participants who co-wrote with the pro-social media AI assistant produced more sentences favoring the notion that social media is beneficial, while those with the opposing AI assistant expressed more negative views, as determined by independent judges. Furthermore, these participants were more likely to adopt their assistant's opinion in a follow-up survey.

The researchers considered the possibility that participants simply accepted the AI suggestions to complete the task more quickly. However, even those who took several minutes to compose their paragraphs ended up with heavily influenced statements. Surprisingly, the majority of participants did not even realize the bias in the AI assistant and remained unaware of the influence exerted on them.

According to Naaman, co-writing with AI assistants did not feel like persuasion to participants; it felt like a natural and organic process of expressing their own thoughts with some assistance. The research team repeated the study with a different topic, and once again, participants demonstrated susceptibility to the AI assistants' influence. The team is now investigating the duration of these effects and how the experience leads to shifts in opinion.

Just as social media has altered the political landscape by facilitating the spread of misinformation and the formation of echo chambers, biased AI writing tools could produce similar opinion shifts, depending on which tools users choose. Some organizations have even announced plans to develop alternatives to ChatGPT with more conservative viewpoints.

Given the potential for misuse and the need for monitoring and regulation, the researchers stress the importance of public discussion surrounding these powerful technologies. As Jakesch points out, the more deeply embedded these technologies become in our societies, the more cautious we should be about governing the values, priorities, and opinions embedded within them.

Advait Bhat from Microsoft Research, Daniel Buschek from the University of Bayreuth, and Lior Zalmanson of Tel Aviv University contributed to the paper.

Source: Cornell University
