Optimizing GPU Performance for YOLOv8

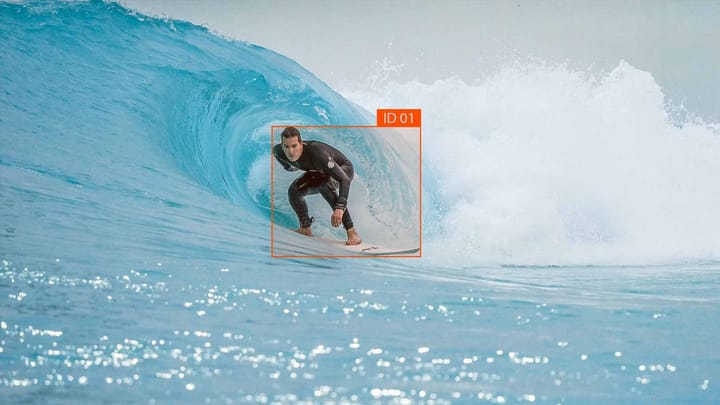

Improving your GPU's performance is key to unlocking the full potential of YOLOv8. This architecture is well known in computer vision for its balance of speed, accuracy, and cost-efficiency, and its anchor-free design handles object detection, segmentation, and classification tasks. To make the most of it, you need to understand and tune your GPU's capabilities.

Key Takeaways:

- Optimizing GPU performance is crucial for achieving real-time object detection with YOLOv8.

- Understanding the GPU requirements is essential for harnessing the full power of YOLOv8.

- YOLOv8 is known for its anchor-free design and focus on speed, latency, and affordability.

- Choosing a compatible GPU and considering factors like CUDA support is important for efficient YOLOv8 performance.

- By optimizing GPU performance, you can unlock the full potential of YOLOv8 for various computer vision applications.

YOLOv8 GPU Requirements

YOLOv8, a well-regarded architecture for object detection and classification, was developed by Ultralytics. It runs on many GPUs, although performance varies with each card's compute power and memory.

To use YOLOv8 effectively, a graphics card that meets the recommended specs is advised. A CUDA-compatible NVIDIA GPU is strongly recommended for getting the most out of YOLOv8, especially for training; the model can also run on a CPU, but much more slowly.

Exact benchmarks for YOLOv8's GPU demands are not published. Even so, choosing a graphics card with adequate specs is important for using YOLOv8 efficiently.

YOLOv8 Minimum GPU Specs

Although there is no official benchmark and no one-size-fits-all answer for the ideal YOLOv8 GPU, a few guidelines help when selecting a graphics card.

One important aspect is the CUDA core count. YOLOv8 relies heavily on parallel processing, so, within a GPU generation, a card with more CUDA cores generally delivers better performance. VRAM capacity is equally important: memory use grows with batch size and input resolution, so training on high-resolution images or videos, or with large batches, requires considerably more VRAM.
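To see why VRAM needs depend more on batch size and resolution than on the model itself, here is a back-of-the-envelope sketch of how much memory the weights alone occupy. The parameter counts (about 3.2M for YOLOv8n, 11.2M for YOLOv8s, and 68.2M for YOLOv8x) are approximate figures from Ultralytics' published model table; activations, gradients, and optimizer state during training come on top of this and are not modeled.

```python
# Back-of-the-envelope estimate of the memory taken by model weights alone.
# Assumption: parameter counts are approximate values from Ultralytics' YOLOv8 model table.
PARAMS = {
    "yolov8n": 3.2e6,
    "yolov8s": 11.2e6,
    "yolov8x": 68.2e6,
}

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_mb(num_params: float, dtype: str = "fp32") -> float:
    """Return the size of the weights in megabytes (1 MB = 1e6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e6


if __name__ == "__main__":
    for name, n in PARAMS.items():
        print(f"{name}: {weight_memory_mb(n):.1f} MB fp32, "
              f"{weight_memory_mb(n, 'fp16'):.1f} MB fp16")
```

Even YOLOv8x needs only about 273 MB for its FP32 weights, so the multi-gigabyte footprint of training comes largely from per-image activations (plus gradients and optimizer state), which is why batch size and input resolution dominate VRAM requirements.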

Compatibility is another key consideration. YOLOv8 is implemented in PyTorch, so the GPU must be supported by a CUDA-enabled PyTorch build and a sufficiently recent NVIDIA driver. Other frameworks such as TensorFlow are reached by exporting the trained model rather than by running YOLOv8 natively, and Darknet is the framework behind the original YOLO releases, not YOLOv8.
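Before training, it can help to confirm that PyTorch actually sees the GPU. The function below is a small sketch built on standard `torch.cuda` calls (`is_available`, `get_device_properties`) and `torch.version.cuda`; the function name and the returned dictionary layout are illustrative choices, and it reports a CPU fallback if PyTorch or CUDA is unavailable.

```python
# Illustrative helper (not part of any library): report what PyTorch can see.
# Assumes PyTorch may or may not be installed; falls back gracefully if not.


def describe_accelerator() -> dict:
    """Return basic facts about the first CUDA device, or a CPU fallback."""
    try:
        import torch
    except ImportError:
        return {"device": "cpu", "reason": "PyTorch not installed"}

    if not torch.cuda.is_available():
        return {"device": "cpu", "reason": "no CUDA device visible to PyTorch"}

    props = torch.cuda.get_device_properties(0)
    return {
        "device": "cuda:0",
        "name": props.name,
        "vram_gib": round(props.total_memory / 1024**3, 1),  # total memory in GiB
        "compute_capability": f"{props.major}.{props.minor}",
        "cuda_runtime": torch.version.cuda,  # CUDA version this PyTorch build targets
    }


if __name__ == "__main__":
    print(describe_accelerator())
```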

Overall, in the absence of official benchmarks, focus on CUDA core count, VRAM capacity, and compatibility with your PyTorch and CUDA setup when choosing a graphics card for YOLOv8.

YOLOv8 System Requirements

Running YOLOv8 efficiently requires a system that can handle heavy computation. Exact specifications are not published, but a high-performance CPU, plenty of RAM, and a suitable GPU are key to running YOLOv8 smoothly.

A fast, modern CPU with multiple cores and high clock speeds keeps data loading and image preprocessing (decoding, resizing, augmentation) from becoming a bottleneck, and it determines inference speed when no GPU is used.

Ample RAM lets YOLOv8 work with large datasets smoothly. For example, during training, images can be cached in memory instead of being read from disk every epoch, which speeds up data loading.
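To gauge how much RAM caching a dataset might need, a rough estimate multiplies the number of images by the size of one decoded, resized image (height × width × 3 bytes for 8-bit RGB). This is a simplifying assumption: it treats every image as a square at the training size and ignores per-image overhead, so the result is an order-of-magnitude figure. In the Ultralytics trainer, caching is controlled by the `cache` training argument.

```python
def cache_ram_gb(num_images: int, imgsz: int = 640, channels: int = 3) -> float:
    """Approximate RAM (GB, 1 GB = 1e9 bytes) to cache square uint8 images.

    Assumption: each cached image is imgsz x imgsz x channels bytes, with no overhead.
    """
    bytes_per_image = imgsz * imgsz * channels  # one byte per uint8 pixel value
    return num_images * bytes_per_image / 1e9


if __name__ == "__main__":
    for n in (1_000, 10_000, 100_000):
        print(f"{n:>7} images at 640 px: ~{cache_ram_gb(n):.1f} GB")
```

At 640 px, 10,000 images already need roughly 12 GB, so on smaller machines caching to disk, or not caching at all, may be the better trade-off.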

Choosing the right GPU is critical for getting the most out of YOLOv8. The GPU should be CUDA-compatible, meaning an NVIDIA card that supports CUDA, NVIDIA's parallel computing platform and API. This lets YOLOv8 run its heavy tensor computations on the GPU for faster object detection.
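In the `ultralytics` package, the GPU is selected per call through the `device` argument, and `half=True` requests FP16 inference, which is generally only worthwhile on a CUDA GPU. The helper below is a hypothetical convenience function (not part of the package) that picks these two arguments; the commented usage assumes `ultralytics` and PyTorch are installed and that `bus.jpg` is a local image.

```python
def predict_kwargs(cuda_available: bool, gpu_index: int = 0) -> dict:
    """Hypothetical helper: choose Ultralytics predict() arguments for the hardware.

    On a CUDA GPU, run on that device with FP16 (half precision);
    otherwise fall back to the CPU in full FP32 precision.
    """
    if cuda_available:
        return {"device": gpu_index, "half": True}
    return {"device": "cpu", "half": False}


# Example usage (requires `pip install ultralytics`; bus.jpg is a placeholder image):
#   import torch
#   from ultralytics import YOLO
#   model = YOLO("yolov8n.pt")
#   results = model.predict("bus.jpg", **predict_kwargs(torch.cuda.is_available()))

if __name__ == "__main__":
    print(predict_kwargs(True))   # GPU path
    print(predict_kwargs(False))  # CPU fallback
```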


To get the best out of YOLOv8, make sure your system follows these guidelines; doing so supports efficient, real-time object detection.

Example GPUs and CUDA Support:

| GPU Model | Memory | CUDA Support |
|---|---|---|
| NVIDIA GeForce RTX 2080 Ti | 11 GB GDDR6 | Yes |
| NVIDIA GeForce GTX 1660 Ti | 6 GB GDDR6 | Yes |
| AMD Radeon RX 5700 XT | 8 GB GDDR6 | No* |

*AMD GPUs do not support CUDA. PyTorch offers ROCm builds for some AMD GPUs, but an NVIDIA GPU is the most straightforward choice for YOLOv8.

The right system is vital for the best YOLOv8 performance: pair a capable CPU and enough RAM with a GPU that works with YOLOv8, and it can excel at object detection.

YOLOv8 Performance on CPUs

YOLOv8 is designed to run efficiently on GPUs, but it can also perform well on CPUs. One way to achieve this is DeepSparse, Neural Magic's CPU inference runtime: with DeepSparse, YOLOv8 delivers high throughput and low latency on AWS CPU instances.

DeepSparse: Delivering Efficient CPU Performance

DeepSparse is central to YOLOv8's efficiency on CPUs. Through targeted optimization, it brings the model's capabilities to applications that are limited to CPUs, so YOLOv8 works well even without a GPU.

DeepSparse relies on weight pruning and quantization, which are typically applied to the model beforehand (for example, with Neural Magic's SparseML library). These techniques sharply reduce the computation YOLOv8 needs, so it can process image and video data quickly and efficiently on CPU systems.
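Both techniques can be illustrated on a single weight tensor. The sketch below uses NumPy to apply unstructured magnitude pruning (zeroing the smallest-magnitude weights) and symmetric per-tensor INT8 quantization. It is a toy illustration of the ideas, not how SparseML or DeepSparse implement them; real pipelines prune gradually during training and fine-tune to recover accuracy.

```python
import numpy as np

# Toy illustration only: not SparseML/DeepSparse code.


def magnitude_prune(weights: np.ndarray, sparsity: float) -> np.ndarray:
    """Zero out (at least) the `sparsity` fraction of smallest-magnitude weights."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(sparsity * weights.size)  # number of weights to remove
    if k == 0:
        return weights.copy()
    magnitudes = np.abs(weights)
    # k-th smallest magnitude; everything at or below it is pruned (ties may prune extra)
    threshold = np.partition(magnitudes.ravel(), k - 1)[k - 1]
    return np.where(magnitudes > threshold, weights, 0.0)


def quantize_int8(weights: np.ndarray) -> tuple[np.ndarray, float]:
    """Symmetric per-tensor INT8 quantization: w is approximated by q * scale."""
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Map INT8 values back to floats for comparison with the originals."""
    return q.astype(np.float32) * scale


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w = rng.normal(size=(64, 64)).astype(np.float32)

    pruned = magnitude_prune(w, sparsity=0.8)
    print(f"sparsity: {np.mean(pruned == 0):.2f}")

    q, scale = quantize_int8(pruned)  # zeros stay exactly zero after quantization
    err = np.max(np.abs(dequantize(q, scale) - pruned))
    print(f"max abs quantization error: {err:.4f} (scale/2 = {scale / 2:.4f})")
```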

Deploying YOLOv8 on AWS

For AWS deployments, DeepSparse is a smart choice: it lets YOLOv8 run efficiently on CPU instances, so developers can draw on AWS's infrastructure for real-time object detection.

DeepSparse makes YOLOv8 run well on AWS, which makes it a solid option for cloud-based workflows. FPS and latency figures for YOLOv8s and YOLOv8n appear in the benchmark section below.

Setting up YOLOv8 on AWS comes down to choosing the right instance type and software setup, and the steps vary with your needs. For step-by-step instructions, see the AWS and Ultralytics documentation.

Deploying YOLOv8 on AWS requires understanding your application's demands, such as throughput, latency, and budget, and tuning the setup accordingly to get the best performance where it counts.
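One concrete way to size a deployment is to compare the frame rate your application needs with the throughput you measure on a single instance. The sketch below assumes every stream is processed at a fixed frame rate and that instances scale linearly; the 70% utilization headroom and the example numbers are hypothetical placeholders, not measured values.

```python
import math


def instances_needed(streams: int, fps_per_stream: float,
                     measured_instance_fps: float, utilization: float = 0.7) -> int:
    """Estimate how many identical instances are needed to keep up.

    Assumes linear scaling; utilization < 1 leaves headroom for spikes
    and for pre/post-processing not captured in the benchmark.
    """
    if measured_instance_fps <= 0 or not 0 < utilization <= 1:
        raise ValueError("throughput must be positive and utilization in (0, 1]")
    required_fps = streams * fps_per_stream
    usable_fps = measured_instance_fps * utilization
    return math.ceil(required_fps / usable_fps)


if __name__ == "__main__":
    # Hypothetical: 8 cameras at 15 FPS each, one instance benchmarked at 100 FPS.
    print(instances_needed(streams=8, fps_per_stream=15, measured_instance_fps=100))
```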

Example AWS Architecture for YOLOv8 Deployment

| Component | Description |
|---|---|
| EC2 Instances | Virtual servers on AWS that provide the compute for running YOLOv8 models |
| AWS Lambda | Serverless compute service for running small, individual tasks in the YOLOv8 processing pipeline |
| S3 Bucket | Scalable storage for input data and YOLOv8 model files |
| CloudFront | Content delivery network that reduces latency by caching content, such as YOLOv8 model files, at edge locations |

This setup illustrates a scalable, high-performance YOLOv8 deployment on AWS; adjust the architecture to meet your needs.

Together, DeepSparse and AWS make a strong combination for YOLOv8, enabling powerful, real-time object recognition.

Training YOLOv8 with Ultralytics Package

Ultralytics has developed a Python package, "ultralytics", for training, fine-tuning, and validating YOLOv8 models. It simplifies these tasks for researchers and developers alike.

The package lets you customize YOLOv8 models for specific needs by training on your own data. It can also export models to ONNX format, broadening deployment options.
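A YOLOv8 model exported to ONNX with the `ultralytics` package (`model.export(format="onnx")`) expects a batched, channels-first float tensor with pixel values scaled to [0, 1]. The sketch below shows that preprocessing, assuming the default 640×640 export size and an image that has already been resized (letterboxed) to 640×640 RGB; the resizing step and the call into a runtime such as ONNX Runtime are left out.

```python
import numpy as np


def to_yolov8_input(image_rgb: np.ndarray) -> np.ndarray:
    """Convert an HxWx3 uint8 RGB image into a 1x3xHxW float32 tensor in [0, 1].

    Assumption: the image is already resized/letterboxed to the export size
    (640x640 by default).
    """
    if image_rgb.ndim != 3 or image_rgb.shape[2] != 3:
        raise ValueError("expected an HxWx3 image")
    chw = np.transpose(image_rgb, (2, 0, 1))              # HWC -> CHW
    tensor = chw.astype(np.float32) / 255.0               # scale pixels to [0, 1]
    return np.ascontiguousarray(tensor[np.newaxis, ...])  # add batch dimension


if __name__ == "__main__":
    dummy = np.zeros((640, 640, 3), dtype=np.uint8)
    x = to_yolov8_input(dummy)
    print(x.shape, x.dtype)  # (1, 3, 640, 640) float32
```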

The package's simplicity is a major selling point: its intuitive API and clear code examples let users work with YOLOv8 models with little friction.

"The Ultralytics package has transformed my process. It has cut down the time and energy invested in training YOLOv8 models. The clearly documented examples and simple APIs streamline the training journey."

Getting started with Ultralytics is straightforward: import the package, load a pretrained model, point it at your dataset, and start training. This lets you concentrate on your main objectives rather than low-level details.

Ultralytics also offers strong validation features. Evaluating a model on validation data confirms how well it performs before deployment, and this testing is key to refining models for better accuracy and efficiency.

Training YOLOv8 with Ultralytics - Step by Step:

- Import the YOLO class from the package:

  - `from ultralytics import YOLO`

- Load a pretrained YOLOv8 checkpoint to fine-tune (here, the nano model):

  - `model = YOLO("yolov8n.pt")`

- Train on your custom dataset, described by a dataset YAML file (for example `custom_data.yaml`, a placeholder name) that lists the image folders and class names; the number of classes is taken from that file:

  - `model.train(data="custom_data.yaml", epochs=10, imgsz=640, batch=8)`

- Validate your trained model:

  - `metrics = model.val()`

  - `print(metrics.box.map)  # mAP50-95 on the validation split`

- Export the trained model to ONNX for deployment:

  - `model.export(format="onnx")`

By following these steps, training, fine-tuning, and validating YOLOv8 models with Ultralytics becomes simple.
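In the `ultralytics` package, training on custom data is driven by a dataset YAML file passed as the `data` argument of `YOLO.train()`. The sketch below writes a minimal one; the keys (`path`, `train`, `val`, `names`) follow the layout of Ultralytics' bundled dataset files such as `coco8.yaml`, while the dataset root, folder names, and class names are hypothetical placeholders.

```python
from pathlib import Path


def write_dataset_yaml(yaml_path: str, root: str, class_names: list[str],
                       train: str = "images/train", val: str = "images/val") -> str:
    """Write a minimal Ultralytics-style dataset YAML and return its text.

    train/val are relative to root; class ids follow list order.
    Class names containing YAML special characters would need quoting.
    """
    lines = [f"path: {root}", f"train: {train}", f"val: {val}", "names:"]
    lines += [f"  {i}: {name}" for i, name in enumerate(class_names)]
    text = "\n".join(lines) + "\n"
    Path(yaml_path).write_text(text, encoding="utf-8")
    return text


if __name__ == "__main__":
    # Hypothetical dataset root and classes; replace with your own.
    print(write_dataset_yaml("custom_data.yaml", "datasets/custom", ["person", "helmet"]))
    # Then, with ultralytics installed:
    #   from ultralytics import YOLO
    #   YOLO("yolov8n.pt").train(data="custom_data.yaml", epochs=10, imgsz=640)
```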

| Advantages of Training YOLOv8 with Ultralytics | Key Features |
|---|---|
| 1. Streamlined training process: Ultralytics simplifies the training of YOLOv8 models, allowing researchers and developers to focus on their core tasks. | - Intuitive APIs |
| 2. Custom data support: Easily train YOLOv8 models with your own custom datasets, making them adaptable to your specific requirements. | - Custom data loading |
| 3. ONNX model conversion: Convert trained YOLOv8 models to the ONNX format for seamless deployment across different platforms and frameworks. | - ONNX model export |
| 4. Comprehensive validation capabilities: Validate trained models using your validation datasets to ensure optimal performance before deployment. | - Validation metrics evaluation |

The Ultralytics package makes training YOLOv8 models much easier, helping you get the full benefit of YOLOv8 in object detection and classification projects.

Integration of YOLOv8 with DeepSparse

Integrating YOLOv8 with DeepSparse opens new possibilities for CPU optimization. DeepSparse uses weight pruning and quantization to boost performance, which leads to strong results when running YOLOv8 on CPUs.

With DeepSparse, YOLOv8's CPU performance improves significantly, which is especially useful when a GPU is not an option. However, developers would benefit from more detailed documentation of the integration process, covering both the steps and the benefits.

Pruning and quantization are the core of this optimization, and they bring YOLOv8 on CPUs to a level that marks a step forward in cost and efficiency.

Although detailed integration information is limited, resources on integrating YOLOv8 with DeepSparse give a better grasp of how to implement it, what gains to expect, and how the two can jointly improve CPU performance.

Advantages of YOLOv8 Integration with DeepSparse

- Improved CPU performance: With DeepSparse, YOLOv8 shines on CPUs, presenting a GPU-free alternative.

- Cost-effective deployment: This integration makes high-end inference accessible on standard CPUs, reducing costs.

- Optimized resource use: DeepSparse's methods ensure YOLOv8's top performance without wasting resources.

Combining YOLOv8 with DeepSparse enables efficient object detection on CPUs, an important advance for applications where GPUs are hard to obtain or expensive.

The sections below explore the potential of YOLOv8 and DeepSparse further; their integration is a step toward better CPU inference.

Sparsity Optimization for YOLOv8

Upcoming sparsified YOLOv8 variants use sparsity optimization to improve performance and reduce model size. Through techniques like weight pruning and quantization, these optimized models are expected to deliver faster inference and smaller sizes while preserving accuracy.

Sparsity optimization in YOLOv8 meets the growing demand for efficient object detection models. Removing redundant parameters increases the models' sparsity, so inference becomes faster while remaining accurate, striking a balance between efficiency and precision.

This step aligns with Ultralytics' ongoing effort to improve YOLOv8. With state-of-the-art optimization, the sparsified models are expected to perform especially well for real-time detection in resource-constrained settings.

Though exact details on the sparsified YOLOv8 versions aren't available, research on sparsity in deep learning sheds light on its merits. This body of work indicates that sparsity optimization notably slashes parameters and computational needs, enhancing inference speed and lowering memory consumption.

To keep abreast of YOLOv8's sparsity advancements, scholars and engineers should look to recent literature. An enlightening resource is an NCBI article that delves into sparsity methods' effects on deep learning models [source].

Benefits of YOLOv8 Sparsity Optimization

Sparsity optimization in YOLOv8 presents numerous advantages:

- Faster Inference: It cuts down the computations needed during inference, leading to quicker processing and enhanced real-time detection abilities.

- Smaller Model Size: By eliminating surplus parameters, model sizes decrease. This makes YOLOv8 models more accessible and manageable for devices with limited storage.

- Efficient Resource Utilization: YOLOv8 thus uses computational resources with higher efficiency, facilitating scalability and wider hardware platform support.
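A rough way to see how pruning and quantization shrink a model is to compare a dense FP32 layout with a sparse INT8 one. The sketch assumes a simple storage scheme chosen for illustration: nonzero weights stored as 1-byte INT8 values plus a 1-bit-per-weight mask marking which positions are nonzero. Real sparse formats differ; the 11.2M parameter count is the approximate figure Ultralytics lists for YOLOv8s, and 80% sparsity is a hypothetical example.

```python
def dense_size_mb(num_params: float, bytes_per_value: int = 4) -> float:
    """Dense storage: every weight stored (FP32 = 4 bytes by default)."""
    return num_params * bytes_per_value / 1e6


def sparse_size_mb(num_params: float, sparsity: float, bytes_per_value: int = 1) -> float:
    """Assumed sparse layout: nonzero values (INT8 = 1 byte) plus a 1-bit-per-weight mask."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    nonzeros = num_params * (1.0 - sparsity)
    mask_bytes = num_params / 8
    return (nonzeros * bytes_per_value + mask_bytes) / 1e6


if __name__ == "__main__":
    n = 11.2e6  # approximate YOLOv8s parameter count
    print(f"dense fp32: {dense_size_mb(n):.1f} MB")
    print(f"80% sparse int8 + mask: {sparse_size_mb(n, 0.8):.2f} MB")
```

Under these assumptions the weights shrink roughly twelvefold (44.8 MB to about 3.6 MB); actual savings depend on the sparsity pattern and file format.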

By leveraging sparsity optimization's strengths, YOLOv8's models significantly elevate performance and resource efficiency. They become an enticing pick for numerous vision-based applications.

The next section looks at the inference speed and cost of YOLOv8 models, and at how these factors affect deployment practicality and real-time performance.

Cost and Speed of Inference for YOLOv8

In real-world settings, the cost and speed of running YOLOv8 models are key considerations, and optimizing them can greatly improve your system's performance and economics. DeepSparse stands out here for delivering GPU-class speeds on ordinary CPUs while reducing expenditure.

DeepSparse speeds up CPU-based inference for deep learning models by exploiting weight pruning and quantization. This can bring CPU speeds close to those of GPUs, making costly GPU setups unnecessary for many workloads.

Using DeepSparse, you can lower YOLOv8 deployment costs by running on more affordable CPUs. This cost reduction makes YOLOv8 practical for more businesses and developers.
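The cost argument can be made concrete by converting an instance's hourly price and measured throughput into a cost per million images. The prices and frame rates below are hypothetical placeholders, not AWS list prices or measured results; substitute your own instance pricing and benchmark numbers.

```python
def cost_per_million_images(hourly_price_usd: float, throughput_fps: float) -> float:
    """Cost (USD) to process one million images at full, sustained utilization."""
    if throughput_fps <= 0:
        raise ValueError("throughput must be positive")
    images_per_hour = throughput_fps * 3600
    return hourly_price_usd / images_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical instances: a cheaper CPU box vs a pricier GPU box.
    cpu = cost_per_million_images(hourly_price_usd=0.40, throughput_fps=100)
    gpu = cost_per_million_images(hourly_price_usd=1.20, throughput_fps=250)
    print(f"CPU: ${cpu:.2f} per million images")
    print(f"GPU: ${gpu:.2f} per million images")
```

In this made-up example, the CPU instance is slower but cheaper per image; whether that holds in practice depends on real prices and throughput, and on whether the slower instance still meets your latency target.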

DeepSparse enhances YOLOv8's frame rate, crucial for real-time object detection needs. It enables the quick and accurate detection of objects in various systems, such as surveillance and self-driving cars.

The combination of DeepSparse with YOLOv8 hints at a cost-saving and swift inference solution. It lets you work efficiently with YOLOv8 using standard CPUs, without compromising on performance. Hence, it is a practical solution for numerous application scenarios.

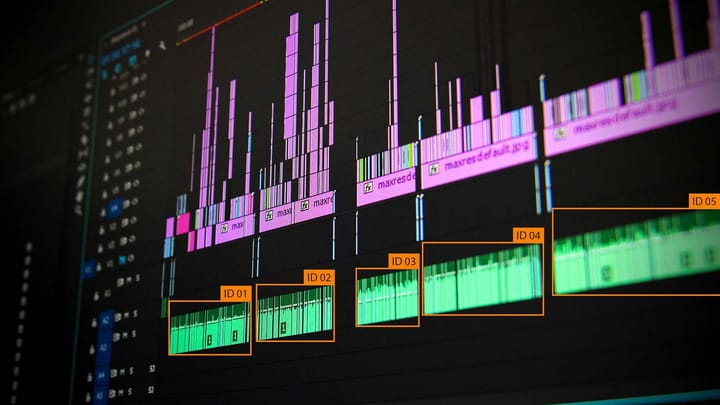

Image depicting the cost and speed considerations when deploying YOLOv8 models

YOLOv8 Performance Benchmarks

YOLOv8's benchmarks show how it performs under real-world demands. They measure throughput on AWS servers, in frames per second (FPS), and mean latency, the average time taken to process a single frame.

"The benchmark results show YOLOv8 shines across different setups. The models process a lot of data quickly and with minimal delay. This is ideal for spotting objects in the moment."

YOLOv8 Benchmark Results on AWS

The AWS tests ran YOLOv8 variants in several configurations. The results offer useful guidance for anyone designing applications and systems around YOLOv8.

| Configuration | FPS | Mean Latency (ms) |
|---|---|---|
| YOLOv8s with DeepSparse | 127 | 7.87 |
| YOLOv8n with DeepSparse | 92 | 10.85 |
| YOLOv8s without DeepSparse | 95 | 10.53 |
| YOLOv8n without DeepSparse | 70 | 13.18 |

These results give detailed performance figures for different YOLOv8 configurations on AWS and show how well YOLOv8 handles real-time object detection.
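For sequential, one-frame-at-a-time processing, throughput and mean latency are linked by FPS ≈ 1000 / latency (ms). A quick consistency check on the table's figures, sketched below, shows that the first three rows agree to within 0.2 FPS, while the last row does not: 1000 / 13.18 ms is about 75.9 FPS rather than 70. Either figure may be a typo, or the two columns may have been measured differently.

```python
# Benchmark figures copied from the table above: (configuration, FPS, mean latency in ms).
ROWS = [
    ("YOLOv8s with DeepSparse", 127, 7.87),
    ("YOLOv8n with DeepSparse", 92, 10.85),
    ("YOLOv8s without DeepSparse", 95, 10.53),
    ("YOLOv8n without DeepSparse", 70, 13.18),
]


def implied_fps(latency_ms: float) -> float:
    """Throughput implied by processing one frame at a time with this mean latency."""
    return 1000.0 / latency_ms


def inconsistent_rows(rows, tolerance_fps: float = 1.0):
    """Return (name, reported, implied) for rows whose FPS and latency disagree."""
    flagged = []
    for name, fps, latency in rows:
        implied = implied_fps(latency)
        if abs(implied - fps) > tolerance_fps:
            flagged.append((name, fps, round(implied, 1)))
    return flagged


if __name__ == "__main__":
    for name, fps, latency in ROWS:
        print(f"{name}: reported {fps} FPS, implied {implied_fps(latency):.1f} FPS")
    print(inconsistent_rows(ROWS))
```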

Conclusion

Optimizing GPU performance is key to achieving the best from YOLOv8 for object detection. By selecting GPUs with suitable features and robust CUDA support, users unlock YOLOv8's full potential. This, in turn, greatly boosts its efficiency.

Additionally, using tools like DeepSparse significantly improves YOLOv8’s ability on CPUs. It enables quick processing and minimal delay even on standard hardware. As a result, users can utilize YOLOv8 effectively across many more systems without compromising on performance.

Looking ahead, sparsified YOLOv8 versions offer exciting possibilities. Thanks to weight pruning and model compression, these variants promise faster detection and smaller models, so performance may improve while resource demands fall.

For real-world applications, it's crucial to weigh the cost and inference speed. DeepSparse’s capacity to emulate high-end GPU capabilities on accessible CPUs stands out for its cost-effectiveness. Strategic consideration of these variables empowers users to implement YOLOv8 effectively for superior object detection.

FAQ

What are the GPU requirements for running YOLOv8 efficiently?

YOLOv8 runs on many GPUs, but a CUDA-compatible NVIDIA GPU is recommended for the best performance. Exact benchmarks are not published, so choose a GPU with enough CUDA cores and VRAM for your workload.

What are the system requirements for YOLOv8?

Exact system requirements for YOLOv8 are not detailed. However, having a high-performance CPU, enough RAM, and the right GPU is advisable for a smooth experience. This setup ensures YOLOv8 functions adequately.

Can YOLOv8 be run on CPUs?

Yes. YOLOv8 can run efficiently on CPUs using DeepSparse, an inference runtime. Tests on AWS show that YOLOv8 with DeepSparse performs well on CPUs, so a CPU-centric approach is feasible.

How can YOLOv8 be deployed on AWS?

Deploying YOLOv8 on AWS can use DeepSparse for better CPU performance. Reported benchmarks on AWS show FPS gains with DeepSparse for both YOLOv8s and YOLOv8n.

Is there a Python package available for training YOLOv8 models?

Ultralytics has crafted the "ultralytics" Python package for easy YOLOv8 model training. It simplifies the process of training, fine-tuning, and validating models. You can also make use of tools for annotating images and videos.

Can YOLOv8 be integrated with DeepSparse?

Integrating YOLOv8 with DeepSparse is possible, enhancing the CPU inference experience. DeepSparse brings about optimizations using weight pruning and quantization. These techniques significantly improve model performance.

Are sparsified versions of YOLOv8 available?

There are talks of upcoming sparsified YOLOv8 versions that focus on being faster and smaller. Enhanced through weight pruning and quantization, these models promise improved inference speeds and reduced sizes. These advancements aim to elevate YOLOv8's efficiency.

What should be considered when deploying YOLOv8 in real-world applications?

When deploying YOLOv8, weigh both the cost and the speed of inference. DeepSparse is one solution: it brings CPU performance closer to that of GPUs, which helps save on deployment costs.

Are there any benchmarks available for YOLOv8 performance?

Benchmark results are available for YOLOv8 on different setups. The data illustrates the model's throughput and latency on AWS, highlighting its real-world applicability. These metrics establish YOLOv8's strong performance in various scenarios.
