Metaverse Content Creation and Annotation

The Metaverse is not just another VR/AR game or a set of static 3D scenes. It is an interconnected digital world that must be intelligent and interactive. For this world to function autonomously, every element (object, avatar, gesture, environment) must have a clear, machine-readable meaning. Annotation supplies that meaning: it transforms passive digital assets into intelligent, interactive entities and makes a true, autonomous Metaverse possible.

The Metaverse is now moving beyond gaming into commerce, education, and the wider economy. Major brands are already creating virtual stores, and universities are building digital campuses. Global investment trends reveal different priorities: China focuses on industrial applications, while the US bets on social infrastructure.

Key Takeaways

- Annotation tells the system that a VR/AR asset is a "chair": it can be sat on and lifted, and it has collision properties.

- Everything a human sees as a complete scene is, for AI, a set of objects, their properties, coordinates, and potential interactions.

- High-quality annotation allows AI agents to react meaningfully.

Content Creation Methods and Tools

Creating billions of VR/AR assets for the Metaverse requires combining time-tested 3D techniques with revolutionary methods of automation. The speed of content production is crucial, which is why the industry is actively shifting from purely manual labor to hybrid, AI-centric pipelines.

Manual Creation

This is the foundation upon which the entire digital world is built, yet it is the slowest method. Professional 3D artists and developers manually create detailed models, textures, and animations. The dominant tools for creation include powerful editors like Blender or Autodesk Maya.

While the quality of this content is the highest, the process is expensive and does not scale to the needs of a dynamic Metaverse.

Generative Creation

This is the most innovative direction, promising to unlock the true speed of content creation. It involves using AI models to automatically create 3D objects or textures from simple textual or visual prompts.

Key Technologies:

- Text-to-3D. Models that can generate a complete three-dimensional model of an object from just a description.

- Image-to-3D. Creating a 3D model based on one or more photographs.

This significantly accelerates the creation of draft versions of objects and textures, turning artists from "builders" into "editors" of AI-generated content.

Photogrammetry and Scanning

This method is the bridge between the real and virtual worlds. It converts real-world objects, people, or entire environments into VR/AR assets, either by capturing a large number of images from different angles or by using specialized 3D scanners.

It is ideal for quickly importing real buildings, furniture, or museum exhibits into the Metaverse, creating highly realistic, immersive environments.

Standards and Compatibility

Regardless of the creation method, the content must "work" everywhere. For the Metaverse to be unified rather than a collection of isolated games, VR/AR assets must be compatible across different platforms like Roblox, Meta, or Microsoft.

This problem is addressed with universal, open formats such as Universal Scene Description (USD), developed by Pixar, and glTF (GL Transmission Format), maintained by the Khronos Group. These standards help ensure that geometry, textures, and basic animations are displayed correctly across virtual environments.
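Open formats can also carry custom data alongside geometry. glTF 2.0, for instance, allows an application-specific `extras` property on its objects. The following is a minimal sketch, not a standard annotation scheme: the `semantic_tags` key and the tag names are assumptions made up for this illustration, and the document contains a single node without any mesh.

```python
import json


def make_annotated_gltf(node_name, tags):
    """Build a minimal glTF 2.0 document with one node that carries custom tags.

    The "semantic_tags" key is a hypothetical convention; glTF only defines
    that "extras" may hold application-specific data.
    """
    return {
        "asset": {"version": "2.0"},  # the only required top-level property
        "scene": 0,
        "scenes": [{"nodes": [0]}],
        "nodes": [{"name": node_name, "extras": {"semantic_tags": tags}}],
    }


doc = make_annotated_gltf("chair", {"can_be_sat_on": True, "material": "wood"})
gltf_text = json.dumps(doc, indent=2)  # text that could be saved as a .gltf file
```

A platform that does not know about `semantic_tags` can simply ignore the field, which is why this kind of extension point suits cross-platform content.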

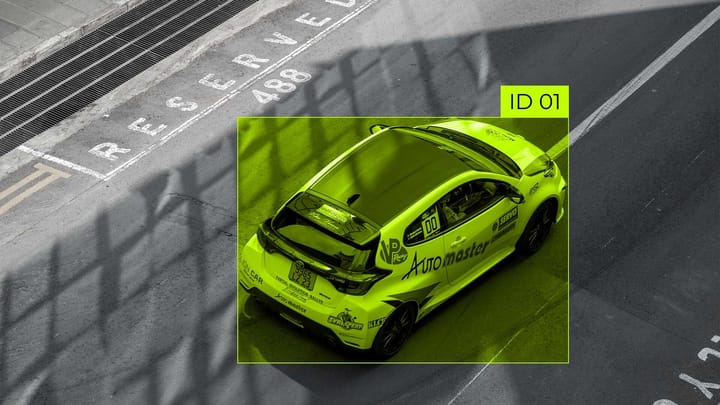

Content Annotation for Adding "Intelligence"

For the human user, a chair is just a chair. But for the artificial intelligence systems that manage the simulation, the "scene" is a set of raw data. Detailed 3D content annotation is needed for robots, avatars, non-player characters, and systems to understand what they are seeing.

This stage is critically important for transforming static VR/AR assets into functional elements of a true Metaverse. Here, annotation moves beyond simple metadata and becomes a tool for giving objects "intelligence" and ensuring their interactivity.

Semantic Annotation

Annotation for the Metaverse is more than just a "chair" label. It involves adding deep semantic information about an object, its purpose, behavior, and interrelationships. If traditional metadata describes what it is, semantic annotation describes how it works and what it is for.

As a 3D object, a chair receives functional tags such as:

- Function: [Can_be_sat_on], [Can_be_moved].

- Physical Properties: [Weight: Medium], [Stability: High].

- Interaction: [Has_Collision: True], [Material: Wood].

As a result, this object becomes a source of immersive data with which systems can interact meaningfully.
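The chair tags above could be represented in code roughly as follows. This is a minimal sketch under assumptions: the `SemanticAnnotation` class and its field names are hypothetical, chosen only to mirror the three tag groups listed in this section.

```python
from dataclasses import dataclass, field


@dataclass
class SemanticAnnotation:
    """Hypothetical container for the tag groups described in the text."""
    label: str
    functions: set = field(default_factory=set)       # what the object is for
    physical: dict = field(default_factory=dict)      # physical properties
    interaction: dict = field(default_factory=dict)   # interaction properties

    def supports(self, function_tag: str) -> bool:
        """Return True if the object carries the given function tag."""
        return function_tag in self.functions


chair = SemanticAnnotation(
    label="chair",
    functions={"Can_be_sat_on", "Can_be_moved"},
    physical={"Weight": "Medium", "Stability": "High"},
    interaction={"Has_Collision": True, "Material": "Wood"},
)
```

A system can then ask `chair.supports("Can_be_sat_on")` instead of guessing behavior from the label alone.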

Necessity for Artificial Intelligence

High-quality annotated content fuels the intelligent systems of the Metaverse. It allows smart agents to interact with objects much as a human would. For example, an AI agent places a cup on the table rather than on the floor because the table carries the function tag [Surface_for_placement] and the floor does not.

Systems can dynamically adapt to the environment. If a user has the tag [Requires_wheelchair], the system can replace all [Can_be_sat_on] objects with appropriate VR/AR assets.
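Both behaviors can be sketched with plain tag lookups. The example below is illustrative only: the scene layout, the function names, and the replacement asset (`wheelchair_space` with a `Wheelchair_accessible` tag) are assumptions, not part of any real platform.

```python
# A toy scene: each object is a name plus a set of semantic tags.
scene = [
    {"name": "table", "tags": {"Surface_for_placement", "Has_Collision"}},
    {"name": "floor", "tags": {"Has_Collision"}},
    {"name": "chair", "tags": {"Can_be_sat_on", "Can_be_moved"}},
]


def find_placement_targets(objects):
    """Return names of objects an agent may put things on."""
    return [o["name"] for o in objects if "Surface_for_placement" in o["tags"]]


def adapt_scene(objects, user_tags, replacement_name="wheelchair_space"):
    """Swap seating objects for an accessible asset when the user needs it.

    The replacement asset and its tag are hypothetical placeholders.
    """
    if "Requires_wheelchair" not in user_tags:
        return list(objects)
    adapted = []
    for obj in objects:
        if "Can_be_sat_on" in obj["tags"]:
            adapted.append({"name": replacement_name,
                            "tags": {"Wheelchair_accessible"}})
        else:
            adapted.append(obj)
    return adapted


targets = find_placement_targets(scene)
adapted_names = [o["name"] for o in adapt_scene(scene, {"Requires_wheelchair"})]
```

Here the agent never needs to recognize a "table" visually; it only queries for the function it needs.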

Annotation Methods

Traditional methods do not account for moving objects or behavioral patterns. Newer approaches apply multi-layered descriptors that describe physics, materials, and interactions.

The future will likely lie in a hybrid, human-in-the-loop approach: AI performs the initial, fast annotation, and a human only verifies and corrects critical tags.
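One possible shape for such a hybrid pipeline is confidence-based routing. This is a sketch under stated assumptions: the set of critical tags, the 0.9 threshold, and the idea that the model reports a confidence score per tag are all illustrative choices, not something the approach prescribes.

```python
CRITICAL_TAGS = {"Has_Collision", "Can_be_sat_on"}  # hypothetical choice
REVIEW_THRESHOLD = 0.9                              # assumed cut-off


def route_predictions(predictions, threshold=REVIEW_THRESHOLD):
    """Split AI-predicted tags into auto-accepted and human-review queues.

    predictions: list of (tag, confidence) pairs from an annotation model.
    Only critical tags with low confidence are sent to a human reviewer.
    """
    accepted, needs_review = [], []
    for tag, confidence in predictions:
        if tag in CRITICAL_TAGS and confidence < threshold:
            needs_review.append(tag)
        else:
            accepted.append(tag)
    return accepted, needs_review


predictions = [
    ("Can_be_sat_on", 0.97),
    ("Has_Collision", 0.62),
    ("Material: Wood", 0.55),
]
accepted, needs_review = route_predictions(predictions)
```

In this run only `Has_Collision` reaches the human reviewer: it is critical and below the threshold, while the low-confidence material tag is accepted because it is not marked critical.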

Challenges and Future Perspectives of the Metaverse

Despite innovations in content creation and annotation, a fully realized Metaverse faces several fundamental challenges that will shape the future direction of technology development.

Scalability

The main technological challenge is the need for a massive volume of unique, high-quality, and, most importantly, annotated content. For the world to feel truly alive, the Metaverse needs billions of unique VR/AR assets. Manual 3D modeling and manual annotation cannot meet this demand.

The future depends on Generative AI. Models are needed that can automatically generate content and then automatically overlay semantic tags and immersive data onto the created objects, minimizing human intervention.

Seamless "Real-Virtual" Pipeline

To enhance the realism and utility of the Metaverse, a perfect two-way data flow must be established. This involves creating tools that allow ordinary users to quickly and accurately digitize real objects and environments using smartphones.

The most interesting aspect for business now is the ability to use 3D content created in the Metaverse for direct production in the real world via 3D printing or CNC machines.

Democratization of Creation

The true potential of the Metaverse will be unleashed when content can be created by millions of users, not just professional studios. To achieve this, 3D modeling and annotation tools must be made so simple and intuitive that an average user can create a quality object in minutes, for example by using voice commands or by lightly editing AI-generated models.

Establishing effective monetization mechanisms is essential to encourage these millions of users to continually enrich the Metaverse with new, high-quality, and annotated content.

The real power of this technology lies in contextual adaptability. Field technicians can access equipment diagrams through AR glasses, and architects can visualize structural changes during a tour with a client. As hardware advances and AI becomes more context-aware, such mixed environments will radically change approaches to solving complex problems.

FAQ

What is an “intelligent” Metaverse and why can’t simple 3D models function in it?

An intelligent Metaverse is an interconnected digital world where objects, avatars, and environments are interactive and autonomous. Simple 3D models are passive: they only have geometry and textures. For the Metaverse to function, each object needs a machine-readable meaning.

How is semantic annotation different from regular metadata, and how does it give an object “intelligence”?

Regular metadata simply describes what it is. Semantic annotation describes how it works and what it is for. It adds functional tags. These tags transform a static VR/AR object into a functional entity that AI agents can interact with in a meaningful way.

How does high-quality annotation prevent an AI agent from dropping a cup?

Well-annotated content gives the AI agent contextual adaptability. The agent doesn’t just see the object “table,” it understands its function: [Surface_for_placement]. Thanks to this logical tag, the AI agent knows that the cup should be placed on the table, not on the floor, since the floor doesn’t have this function.

What universal standards ensure compatibility of VR/AR objects across platforms?

In order for the Metaverse to be a single world, not a set of isolated games, content must be unified. This is solved using open formats such as Universal Scene Description, developed by Pixar, and glTF. These standards ensure that geometry, textures, and basic animations are displayed correctly on any platform.

What is the “Real-Virtual” Pipeline, and how does it relate to 3D printing and manufacturing?

The Real-Virtual Pipeline is a two-way data exchange between the real and virtual worlds. On the one hand, it allows for the rapid digitization of real-world objects into the virtual world. On the other hand, it allows for the use of 3D models created in the Metaverse for direct production in the real world, for example, via 3D printing or CNC machines.
