Labeling High-Resolution Radar Point Clouds for AV

While cameras depend on lighting and LiDAR loses effectiveness due to beam scattering in water droplets or steam particles, high-resolution radars ensure stable operation in the most difficult meteorological conditions. The ability of radio waves to pass through fog, rain, and snow makes this sensor indispensable for safe movement in conditions where other types of sensors go blind.

The main value of a modern radar lies in the kind of data it produces: a point cloud in which every point carries an instantaneous Doppler velocity. Unlike a static image, a radar frame is a four-dimensional picture of the world, where each point has spatial coordinates and a radial velocity, that is, its speed toward or away from the sensor. This allows the autopilot system to quickly distinguish moving cars from static obstacles, even when they are partially blocked by other objects.

However, the transition to high resolution creates a new challenge: the radar point cloud becomes much denser than that of earlier radars and harder for a human to interpret. Instead of a few reflection points, developers receive detailed but noisy data arrays that require precise labeling. High-quality annotation of this data is the foundation of an autonomous car's ability to make correct decisions on the road.

Quick Takes

- Radar is a sensor that maintains stability in fog, heavy rain, or snowfall, where cameras and LiDARs become ineffective.

- Unlike cameras and conventional LiDAR, radar attaches an instantaneous Doppler velocity to each point's coordinates.

- High resolution creates dense but noisy point clouds that are difficult for a human to interpret without the help of cameras.

- Radar labeling is almost impossible without overlaying LiDAR or video data to confirm the reality of objects.

- Using LiDAR as a "teacher" allows for faster radar labeling.

Specifics of Radar Data Labeling

Working with high-resolution radars requires a special approach due to the unique physical nature of the signal. Understanding the structure of this data allows for the proper organization of dataset preparation for autonomous driving systems.

Characteristics of Radar Point Clouds

Even at high resolution, the main feature of such data is sparsity relative to LiDAR: radar point clouds contain significantly fewer points. This means a car might not look like a clear 3D model, but like a scattered group of a few dozen points.

Another important trait is the presence of noise and multipath effects. Radio waves often reflect off metal fences or asphalt surfaces, creating "ghost" objects where none exist. However, the key advantage is Doppler velocity – the radar's ability to instantly measure the speed of each individual point. This makes it possible to distinguish objects not only by their shape but also by the nature of their movement, which is vital for road safety.
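As a minimal sketch of how Doppler separates moving objects from the static scene, the snippet below compares each point's measured radial velocity with the value a stationary point would show given the vehicle's own motion. The function name, the sign convention (negative means approaching), and the 0.5 m/s tolerance are assumptions for illustration, not a specific sensor's API.

```python
import numpy as np

def flag_moving_points(xyz, doppler, ego_velocity, threshold=0.5):
    """Return a boolean mask of points whose Doppler disagrees with a static world.

    xyz:          (N, 3) point positions in the sensor frame, metres (non-zero range)
    doppler:      (N,) measured radial velocity, m/s (assumed: negative = approaching)
    ego_velocity: (3,) sensor velocity expressed in the same frame, m/s
    threshold:    hypothetical tolerance in m/s
    """
    xyz = np.asarray(xyz, dtype=float)
    ranges = np.linalg.norm(xyz, axis=1)
    unit = xyz / ranges[:, None]  # line-of-sight direction to each point
    # Seen from the sensor, a static point moves at -ego_velocity, so its
    # expected radial velocity is that vector projected on the line of sight.
    expected_static = -(unit @ np.asarray(ego_velocity, dtype=float))
    residual = np.asarray(doppler, dtype=float) - expected_static
    return np.abs(residual) > threshold
```

With the vehicle driving at 10 m/s, a static point straight ahead reads about -10 m/s and is not flagged, while a car ahead travelling at the same speed reads about 0 m/s and is flagged as moving.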

Main Approaches to Data Labeling

The choice of annotation method depends directly on the task that the radar perception system needs to solve. Since 4D radar data combines several dimensions (range, azimuth, elevation, and Doppler velocity), labeling can happen at different levels of detail:

- Object Labeling. Creating 3D bounding boxes around point clusters to determine the dimensions and orientation of vehicles.

- Semantic Segmentation. Assigning each point an individual class, such as car, pedestrian, or static fence.

- Velocity Annotation. Checking and refining Doppler values for each object so the model correctly understands the direction of movement.

- Temporal Tracking. Combining consecutive frames into single tracks to follow the movement trajectory of objects over time.

These types of annotations help algorithms better interpret complex scenes. If the task is emergency braking, accurate point classification is the priority. If the system is planning an overtaking maneuver, temporal tracking and the exact speed of neighboring cars play a more important role.
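The four annotation levels listed above can live in one record per frame. The following is an illustrative sketch only; the class and field names are hypothetical and do not follow any particular labeling tool's schema.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

@dataclass
class RadarPoint:
    x: float
    y: float
    z: float
    doppler: float                        # measured radial velocity, m/s
    semantic_class: Optional[str] = None  # semantic segmentation label

@dataclass
class Box3D:
    track_id: int                         # constant across frames (temporal tracking)
    category: str                         # object label, e.g. "car"
    center: Tuple[float, float, float]
    size: Tuple[float, float, float]      # length, width, height in metres
    yaw: float                            # heading in radians
    velocity: Tuple[float, float] = (0.0, 0.0)  # annotated vx, vy in m/s

@dataclass
class RadarFrame:
    timestamp: float
    points: List[RadarPoint] = field(default_factory=list)
    boxes: List[Box3D] = field(default_factory=list)

def group_tracks(frames: List[RadarFrame]) -> Dict[int, List[Tuple[float, Box3D]]]:
    """Collect each object's boxes in time order, keyed by track_id."""
    tracks = defaultdict(list)
    for frame in sorted(frames, key=lambda f: f.timestamp):
        for box in frame.boxes:
            tracks[box.track_id].append((frame.timestamp, box))
    return dict(tracks)
```

Keeping the track ID on the box rather than on the frame makes it straightforward to pull out one object's full trajectory for review.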

Sensor Cooperation in Labeling Workflows

Since radar data itself is quite abstract, high-quality processing is impossible without the context provided by other sensors and specialized software.

Combining Radar with Other Sensors

The process of labeling radar data is almost always based on the concept of sensor fusion. Because individual radar points are hard to identify visually, annotators use camera images and dense LiDAR point clouds as reference layers. The camera provides an understanding of the object's color and type, while LiDAR allows for the exact determination of its boundaries in space. Overlaying this data on radar points helps confirm that a specific signal spike is actually a car and not a ghost reflection from a metal structure.

But this approach creates serious synchronization challenges. Each sensor has its own update frequency and runs on its own internal clock, so even a small timing offset causes radar points to shift relative to the car's real position in the video. Geometric difficulties also arise because the sensors are mounted at different points on the vehicle body. Accurate annotation therefore requires calibration, so that each radar point projects onto the correct pixel in the camera image.
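To make the two problems concrete, here is a rough sketch of timestamp matching and of projecting radar points into a camera image with a pinhole model. The function names, the 50 ms gap limit, and the matrix names `T_cam_from_radar` and `K` are assumptions for illustration; real pipelines also handle lens distortion and motion between frames.

```python
import numpy as np

def nearest_frame(radar_t, camera_ts, max_gap=0.05):
    """Index of the camera frame closest in time to radar_t, or None if too far."""
    camera_ts = np.asarray(camera_ts, dtype=float)
    idx = int(np.argmin(np.abs(camera_ts - radar_t)))
    return idx if abs(camera_ts[idx] - radar_t) <= max_gap else None

def project_to_image(points_radar, T_cam_from_radar, K):
    """Project (N, 3) radar points to pixel coordinates.

    T_cam_from_radar: 4x4 extrinsic transform from the radar to the camera frame
    K:                3x3 camera intrinsic matrix
    The camera frame is assumed to have z pointing forward.
    Returns (N, 2) pixel coordinates and a mask of points in front of the camera.
    """
    pts = np.asarray(points_radar, dtype=float)
    homogeneous = np.hstack([pts, np.ones((len(pts), 1))])
    cam = (np.asarray(T_cam_from_radar, dtype=float) @ homogeneous.T).T[:, :3]
    in_front = cam[:, 2] > 0
    uvw = (np.asarray(K, dtype=float) @ cam.T).T
    # Points at zero depth produce inf/nan here; the mask above excludes them.
    with np.errstate(divide="ignore", invalid="ignore"):
        pixels = uvw[:, :2] / uvw[:, 2:3]
    return pixels, in_front
```

Only pixels where the mask is true are meaningful; points behind the camera still produce numbers but should not be drawn.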

Tools and Workflows for Labeling

The practical process of annotating 4D radar data requires tools that far exceed ordinary photo editors. The working environment should support the simultaneous rendering of multiple windows with different data streams, where changes in one window are instantly reflected in the others.

A typical workflow begins with automatic pre-labeling, where LiDAR-based algorithms suggest initial bounding boxes. An annotator then checks these suggestions, using Doppler values to confirm speed. Special attention is paid to multi-sensor scenes, where the specialist must manually adjust object tracks over time so that each car's ID remains consistent throughout the trip. The result is a reliable dataset that teaches the vehicle to understand not only where obstacles are, but also how they will move in the next second.

Ensuring Reliability and Impact on AI

The quality of radar data preparation directly determines how reliably a car behaves on the road in low visibility. Any inaccuracy in the labels can lead to incorrect algorithm decisions, so validation and impact analysis are critically important.

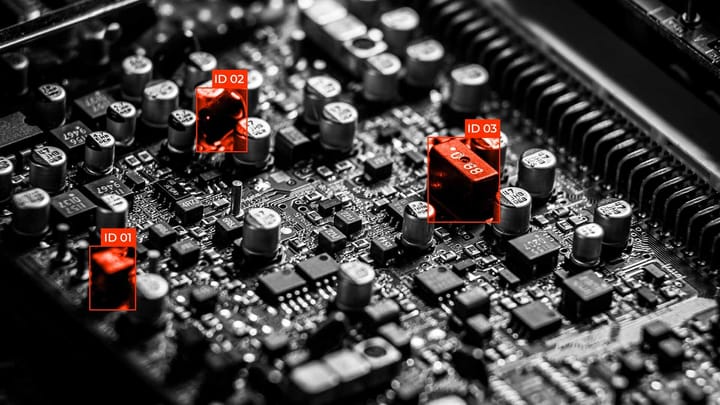

Quality Control of Radar Annotations

Errors in labeling radar point clouds have a much higher cost than in regular video analytics. If an annotator mistakenly classifies a static reflection from a metal bridge as a stopped car, it could cause phantom braking on the highway. Quality control is based on three principles:

- Temporal Consistency. Ensuring the object doesn't disappear or change properties between frames.

- Sensor Consistency. Checking that radar points do not fall outside the boundaries of the object captured by the camera or LiDAR.

- Velocity Validation. Automatically comparing the labeled movement vector with the Doppler velocity reading to find human errors.

Quality assurance specialists use automated inspection tools that highlight anomalies where radar data violates the laws of physics, allowing them to correct frame shifts or misclassifications before the data enters the model training process.
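A velocity validation check of this kind might look like the sketch below. It projects the labeled velocity onto the line of sight to the box center and compares it with the median Doppler of the points inside the box. All names, the 2D ground-plane simplification, and the 1 m/s tolerance are assumptions; positive Doppler is taken to mean moving away from the sensor.

```python
import numpy as np

def doppler_mismatch(box_center, box_velocity, point_dopplers,
                     ego_velocity=(0.0, 0.0), tol=1.0):
    """Compare a labeled velocity with the Doppler readings of an object's points.

    box_center:     (x, y) of the labeled box in the sensor frame, metres
    box_velocity:   labeled (vx, vy) of the object over ground, m/s
    point_dopplers: measured radial velocities of the points in the box, m/s
    ego_velocity:   (vx, vy) of the sensor over ground, m/s
    Returns (is_mismatch, expected_radial, measured_radial).
    """
    center = np.asarray(box_center, dtype=float)
    line_of_sight = center / np.linalg.norm(center)
    relative = np.asarray(box_velocity, dtype=float) - np.asarray(ego_velocity, dtype=float)
    # Radar only observes the velocity component along the line of sight.
    expected = float(relative @ line_of_sight)
    measured = float(np.median(point_dopplers))
    return abs(expected - measured) > tol, expected, measured
```

Because only the radial component is observable, this check can catch a wrong sign or magnitude along the line of sight, but it says little about purely tangential motion.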

Impact of Labeling Quality on AV Models

When a model is trained on high-quality point clouds, it distinguishes real objects from clutter much better during heavy rain or snow. Accurately labeled velocities allow the model to predict the trajectories of other road users more precisely, which is critical for high-speed maneuvers.

Properly labeled data also increases the reliability of the detection and tracking system. Instead of perceiving each group of points as a separate obstacle, the model learns to combine them into coherent objects with clearly defined boundaries. This creates a reliable safety net for the vehicle: even if the camera is blinded by the sun, a properly trained radar module will still provide information about the surrounding environment, helping to protect passengers and pedestrians.

Labeling Challenges and Automation as the Solution

Even with the best tools, the process of annotating radar data is full of physical nuances. Understanding the pitfalls allows developers to create more resilient systems.

Complex Cases and Physical Limits

- Resolution Problem. When two cars move close together at the same speed, the radar may see them as one extra-long object. The annotator must use camera data to understand where to "cut" this single blob into two separate boxes.

- Materials and Reflections. Metal structures like guardrails or trucks create extremely bright and numerous points that blind the sensor. Against this background, pedestrians are barely visible, often as single points, because the human body reflects radio waves poorly.

- Ghost Objects. Multiple signal reflections from the asphalt under a large truck can create a "mirror" object underground or inside the vehicle itself, confusing tracking algorithms.
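For the underground "mirror" case, a simple pre-filter can surface candidates for the annotator. This is a sketch under strong assumptions: points are in a vehicle frame with z pointing up, the road is flat at a known height, and the 0.3 m margin is a hypothetical tolerance for elevation noise.

```python
import numpy as np

def flag_below_ground(points_xyz, ground_z=0.0, margin=0.3):
    """Mask of points that lie clearly below the assumed flat road surface."""
    pts = np.asarray(points_xyz, dtype=float)
    return pts[:, 2] < ground_z - margin
```

Flagged points are candidates for review rather than automatic deletion, since slopes and elevation errors can push real returns below the assumed plane.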

The Role of Automation

Labeling radar data purely by hand is an extremely difficult task. Therefore, modern teams use an auto-labeling strategy where auxiliary algorithms do the bulk of the work.

The most popular method is using trained LiDAR-based models to generate initial boxes and classes for the radar. Since LiDAR sees the shape of an object much more clearly, it acts as a teacher.
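The label transfer step can be sketched as a point-in-box test: every radar point that falls inside a LiDAR-derived box inherits that box's class. The dictionary keys and function names here are hypothetical, and the sketch assumes radar points and LiDAR boxes are already in the same coordinate frame and time-aligned.

```python
import numpy as np

def points_in_box(points_xyz, center, size, yaw):
    """Mask of points inside a 3D box rotated by yaw around the vertical axis."""
    pts = np.asarray(points_xyz, dtype=float) - np.asarray(center, dtype=float)
    c, s = np.cos(-yaw), np.sin(-yaw)
    # Rotate the offsets by -yaw so the box becomes axis-aligned.
    local_x = c * pts[:, 0] - s * pts[:, 1]
    local_y = s * pts[:, 0] + c * pts[:, 1]
    half = np.asarray(size, dtype=float) / 2.0  # half length, width, height
    return (
        (np.abs(local_x) <= half[0])
        & (np.abs(local_y) <= half[1])
        & (np.abs(pts[:, 2]) <= half[2])
    )

def transfer_labels(points_xyz, lidar_boxes):
    """Give each radar point the category of the LiDAR box that contains it."""
    labels = np.full(len(points_xyz), "unlabeled", dtype=object)
    for box in lidar_boxes:
        mask = points_in_box(points_xyz, box["center"], box["size"], box["yaw"])
        labels[mask] = box["category"]
    return labels
```

Points left as "unlabeled" are the ones a human reviewer would typically inspect for noise, ghosts, or objects the LiDAR model missed.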

Additionally, automatic physics validation is actively used: for example, if a point is classified as a static tree but has an ego-motion-compensated Doppler velocity of 100 km/h, the system will automatically flag this sample as an error. This approach allows the human annotator to simply check and correct AI results, speeding up the process tenfold.
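The static-tree rule can be expressed in a few lines. In this sketch the class list, the dictionary keys, and the 3 km/h threshold are all hypothetical, and the Doppler value is assumed to be already compensated for the vehicle's own motion.

```python
STATIC_CLASSES = {"tree", "fence", "building", "guardrail"}  # illustrative list

def flag_physics_violations(points, max_static_speed_kmh=3.0):
    """Return indices of points labeled static whose speed says otherwise.

    points: sequence of dicts with keys 'label' and 'compensated_doppler_kmh'
    """
    flagged = []
    for index, point in enumerate(points):
        is_static = point["label"] in STATIC_CLASSES
        too_fast = abs(point["compensated_doppler_kmh"]) > max_static_speed_kmh
        if is_static and too_fast:
            flagged.append(index)
    return flagged
```

A small non-zero threshold leaves room for measurement noise and imperfect ego-motion estimates.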

FAQ

Why does the radar sometimes not see a stationary concrete block on the road?

This is due to the filtering of static objects. To avoid false alarms on every soda can or manhole cover, algorithms often ignore points with zero Doppler velocity, making the semantic segmentation of static obstacles a difficult task.

How does the radar's tilt angle affect the quality of the point cloud during labeling?

Radars are very sensitive to the angle at which the beam hits a surface: if an object's surface is steeply angled relative to the beam, the signal is reflected away and may simply not return to the sensor. In the annotation, this looks like part of the car disappearing.

How do micro-Doppler effects help distinguish a pedestrian from a cyclist?

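Moving parts of an object, such as swinging arms and legs or rotating wheels and pedals, create additional velocity fluctuations around the object's main Doppler velocity. These micro-Doppler signatures help annotators and models tell pedestrians from cyclists even when the point cloud is very sparse.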

What role does vertical resolution play in modern 4D radars?

Old radars were "flat" and saw the world in 2D, unable to tell a car under a bridge from the bridge itself. Modern 4D radars add a height coordinate, which is vital for annotation as it allows for separating objects in different vertical planes and avoiding phantom braking.
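The height coordinate comes from the elevation angle. As a small sketch, the conversion below turns a range, azimuth, and elevation measurement into Cartesian coordinates. The axis convention (x forward, y left, z up, angles in radians) is an assumption, since conventions differ between sensors.

```python
import math

def to_cartesian(rng, azimuth, elevation):
    """Convert a radar measurement to (x, y, z) in metres.

    rng:       distance to the reflection, metres
    azimuth:   horizontal angle from the forward axis, radians
    elevation: vertical angle above the horizontal plane, radians
    """
    horizontal = rng * math.cos(elevation)  # range projected on the ground plane
    x = horizontal * math.cos(azimuth)
    y = horizontal * math.sin(azimuth)
    z = rng * math.sin(elevation)
    return x, y, z
```

At 50 m range, an elevation of 6 degrees corresponds to a height of roughly 5 m, which is enough to separate a bridge deck from a car at road level.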
