Comparing Top Data Annotation Tools 2026: Features, Pricing & Use Cases

In modern AI and machine learning research, the quality of training data is critical to the efficiency and accuracy of models. Choosing the right annotation tool determines a team’s ability to handle large amounts of data, maintain quality standards, and integrate results with existing ML processes.

This review systematically compares the leading data annotation tools in 2026. The assessment covers key platform functionalities, configuration flexibility, support for collaborative processes, integration with ML ecosystems, and pricing structures.

Key Takeaways

- Pricing structures vary significantly between enterprise and open-source solutions.

- Integration capabilities can streamline your entire machine learning workflow.

- Security certifications are crucial for enterprise-grade applications.

- The right choice depends on team size, budget, and technical requirements.

Understanding the Role of Data Annotation in AI

Data annotation is a fundamental process in the development of AI and machine learning models. The quality and consistency of annotations directly affect model performance, determining accuracy and reliability.

Comparing annotation platforms lets you evaluate the available solutions systematically, taking into account functionality, scalability, collaboration support, and integration with existing ML processes. Clearly defined tool selection criteria help organizations choose a platform that meets specific project requirements, optimizes workflows, and supports high-quality data labeling at scale.

In practice, effective data annotation creates the foundation for AI systems in various areas — from computer vision and natural language processing to speech recognition and autonomous systems.

Key Components of Modern Data Annotation Software

Data annotation companies comparison 2026

Use Cases & Pricing Models for Top Data Annotation Platforms (2026)

Tool Selection Criteria for Data Annotation Platforms

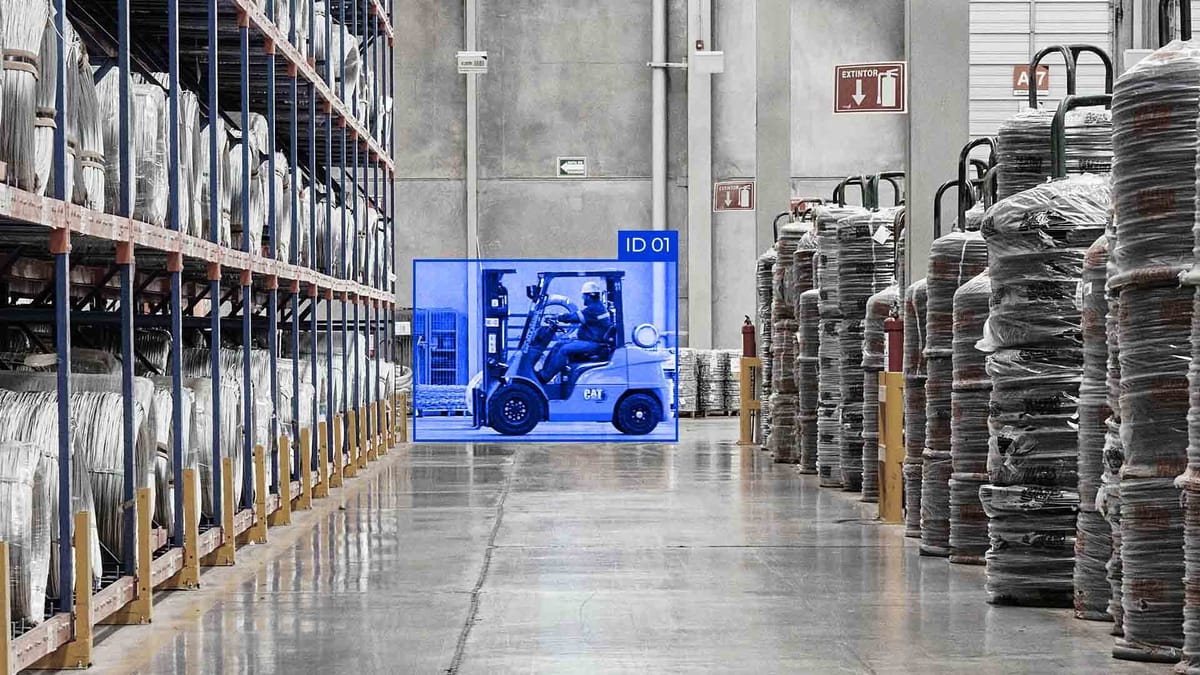

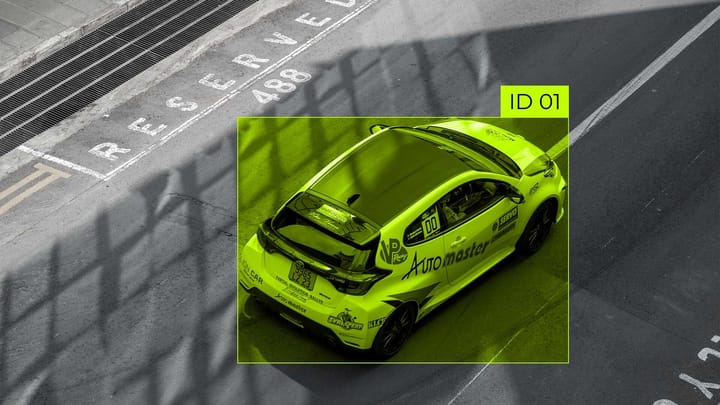

Modern platforms support various types of annotations tailored to the specifics of models and tasks. The most common include bounding boxes for object detection in images and videos, polygons and segmentation masks for precise selection of non-standard-shaped objects, landmarks/keypoints for determining the position of body parts, faces, or mechanisms, and semantic and instance segmentation for deep scene analysis. POS tags, NER, and classification are used for text data, while transcription, segmentation, and classification are used for audio. Separately, 3D point cloud and LiDAR annotations are distinguished, widely used in autonomous driving, robotics, and AR/VR, as well as video annotations, which include frame-by-frame labeling and object tracking.

To choose the optimal platform, evaluate several factors. First, determine the type of data and the type of annotation the project needs. For example, basic tools are not suitable for LiDAR or point cloud data, which call for specialized platforms such as Keylabs or Encord. Project scale also matters: large teams need platforms that support multi-user work, cloud services, and process automation.

Modern tools increasingly offer ML-assisted annotation, which pre-labels data automatically so that annotators only need to correct the results, increasing productivity and accuracy. Quality control is also important: platforms such as SuperAnnotate and Keylabs offer multi-level verification and review of annotations, which helps maintain high-quality datasets.
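One common way to implement a review step for bounding boxes is to compare an annotator's box with a reviewer's box using intersection over union (IoU) and flag low-agreement items for another pass. The sketch below is illustrative only: the function names and the 0.8 threshold are assumptions, not the method of any platform named here.

```python
from typing import Tuple

# Box as (x_min, y_min, x_max, y_max) in pixels. This layout is an assumption.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix_min, iy_min = max(a[0], b[0]), max(a[1], b[1])
    ix_max, iy_max = min(a[2], b[2]), min(a[3], b[3])
    # Clamp to zero so non-overlapping boxes give an empty intersection.
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def needs_review(annotator_box: Box, reviewer_box: Box, threshold: float = 0.8) -> bool:
    """Flag the item when the two boxes agree less than the (hypothetical) threshold."""
    return iou(annotator_box, reviewer_box) < threshold
```

A team would normally tune the threshold per object class, since small objects tend to produce lower IoU for the same pixel error.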

Integration is equally important. The platform should export data in formats compatible with ML pipelines, such as COCO, YOLO, or TFRecord. Budget and payment models also play a significant role: startups and small teams usually choose platforms with free plans or pay-as-you-go pricing, while large enterprise projects often use custom solutions with workforce support, such as Keylabs, Scale AI, or Taskmonk. Finally, the platform's user experience and technical support significantly affect how quickly a team adapts and how efficiently it works.
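To make the format question concrete, here is a minimal sketch of converting one bounding box from the COCO convention (`[x_min, y_min, width, height]` in pixels) to the YOLO text convention (class index, then box center and size normalized by the image dimensions). The function names are hypothetical, and the class index passed in is assumed to be already mapped to YOLO's zero-based numbering.

```python
from typing import Sequence, Tuple


def coco_bbox_to_yolo(
    bbox: Sequence[float], img_w: int, img_h: int
) -> Tuple[float, float, float, float]:
    """Convert a COCO box [x_min, y_min, width, height] in pixels
    to YOLO (x_center, y_center, width, height) normalized to [0, 1]."""
    x_min, y_min, w, h = bbox
    return (
        (x_min + w / 2) / img_w,
        (y_min + h / 2) / img_h,
        w / img_w,
        h / img_h,
    )


def yolo_line(class_id: int, bbox: Sequence[float], img_w: int, img_h: int) -> str:
    """Format one object as a line of a YOLO label file."""
    xc, yc, w, h = coco_bbox_to_yolo(bbox, img_w, img_h)
    return f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"
```

For example, `yolo_line(0, [100, 50, 200, 100], 400, 200)` describes a box centered in the image that covers half of its width and height.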

Given these criteria, we can formulate general recommendations for using platforms. Keylabs is noted for its strong capabilities in 3D, video, and complex computer vision projects that require ML-assisted annotation. Encord is optimally suited for multimodal data and enterprise workflows, while Labelbox and SuperAnnotate work well for medium- to large-sized teams with different types of annotation. Taskmonk and CloudFactory combine a platform with a workforce, making them optimal for large-scale enterprise projects.

Integration & Security Features Across Platforms

Most leading platforms offer APIs and SDKs that let you integrate annotation data directly into your company’s internal ML pipelines. Such integrations simplify data transfer between systems, automate import and export, and let you connect scripts for ML-assisted annotation. The platforms support a range of file formats and data structures, such as COCO, YOLO, TFRecord, JSON, and CSV, which makes them compatible with most modern computer vision, NLP, and multimodal solutions.

When it comes to security, leading platforms apply several layers of protection. First, they support data encryption at rest and in transit. Second, they use authentication and role-based access control (RBAC) systems that allow flexible configuration of user access to projects, tasks, and annotations. Some platforms, such as Keylabs and Encord, also offer deployment on private cloud infrastructure or on-premises, providing maximum control over data for organizations with high security requirements, such as healthcare institutions, financial companies, or the defense industry.
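The idea behind role-based access control can be sketched in a few lines: each role maps to a set of permitted actions, and every request is checked against that set. The roles and action names below are hypothetical and do not reflect any specific platform's permission model.

```python
from enum import Enum


class Role(Enum):
    ANNOTATOR = "annotator"
    REVIEWER = "reviewer"
    ADMIN = "admin"


# Hypothetical permission sets; real platforms define their own roles and actions.
PERMISSIONS = {
    Role.ANNOTATOR: {"view_task", "create_annotation"},
    Role.REVIEWER: {"view_task", "create_annotation", "approve_annotation"},
    Role.ADMIN: {
        "view_task",
        "create_annotation",
        "approve_annotation",
        "export_dataset",
        "manage_users",
    },
}


def is_allowed(role: Role, action: str) -> bool:
    """Return True only if the role's permission set contains the action."""
    return action in PERMISSIONS.get(role, set())
```

Denying by default, as `is_allowed` does for any unknown action, is the usual design choice for sensitive datasets.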

Other important security aspects include auditing and logging of user actions, automatic data backup, and support for compliance with regulations and standards such as GDPR and ISO 27001. Such features help keep data confidential and workflows transparent, which is especially important for large teams and enterprise projects.

Emerging Trends: AI Assistance and Automation in Annotation

Modern data annotation platforms actively integrate AI, enabling significantly faster annotation and greater accuracy. AI-assisted annotation is a key feature for large projects, where the volume of data exceeds manual processing capabilities or when high annotation consistency is required.

The role of AI in annotation is to generate pre-labeled suggestions for objects in images, videos, text, or audio, which a human can then quickly check and correct. This approach can reduce annotation time, with the size of the saving depending on the type of data and the complexity of the task. Most modern platforms offer several levels of automation, including integration with customers' own ML models for specific tasks such as object detection, text classification, or image segmentation.
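One simple way to organize the human check is to split model suggestions by confidence, so that high-confidence pre-labels get a quick check and the rest get a full review. This is a sketch under stated assumptions: the `PreLabel` structure, the queue names, and the 0.9 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]


def route_prelabels(
    prelabels: Iterable[PreLabel], threshold: float = 0.9
) -> Tuple[List[PreLabel], List[PreLabel]]:
    """Split suggestions into a quick-check queue and a full-review queue.

    Both queues are still seen by a human; the split only decides how much
    attention each item gets first.
    """
    quick_check: List[PreLabel] = []
    full_review: List[PreLabel] = []
    for p in prelabels:
        (quick_check if p.confidence >= threshold else full_review).append(p)
    return quick_check, full_review
```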

AI-assisted functions cover several key areas. The first is automatic annotation suggestion: the model pre-labels objects, and a person only checks and corrects the results. The second is active learning, in which the system selects the most difficult or ambiguous examples for manual annotation, thereby maximizing team efficiency and accelerating model training. Semi-automatic workflows are also widely used: the user starts an annotation manually, and the AI suggests how to complete it or predicts the remaining annotations.
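A common active learning strategy is uncertainty sampling: rank unlabeled items by the entropy of the model's predicted class probabilities and send the most uncertain ones to annotators first. The sketch below assumes predictions arrive as a dictionary from item ID to a probability list; the function names are illustrative.

```python
import math
from typing import Dict, List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (natural log) of a probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions: Dict[str, Sequence[float]], k: int) -> List[str]:
    """Return the IDs of the k items whose predictions are most uncertain."""
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,
    )
    return ranked[:k]
```

An item predicted at roughly equal probability for every class has the highest entropy and is selected first, while a confidently classified item is left for later.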

Automation is especially useful for multimodal and large datasets, where purely manual labeling would be extremely laborious. For example, in video or 3D point cloud annotation, AI helps track objects from frame to frame or fill in gaps between labeled frames, significantly increasing speed and accuracy.
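The simplest form of gap filling in video is linear interpolation between two manually labeled keyframes. The sketch below shows that baseline only; it is not a learned tracker, and the function name and box layout are assumptions.

```python
from typing import Dict, Tuple

# Box as (x_min, y_min, x_max, y_max) in pixels. This layout is an assumption.
Box = Tuple[float, ...]


def interpolate_boxes(
    start_frame: int, start_box: Box, end_frame: int, end_box: Box
) -> Dict[int, Box]:
    """Linearly interpolate a box for every frame strictly between two keyframes."""
    if end_frame <= start_frame:
        raise ValueError("end_frame must be greater than start_frame")
    span = end_frame - start_frame
    result: Dict[int, Box] = {}
    for frame in range(start_frame + 1, end_frame):
        t = (frame - start_frame) / span  # fraction of the way to the end keyframe
        result[frame] = tuple(s + t * (e - s) for s, e in zip(start_box, end_box))
    return result
```

Linear interpolation works well for smooth motion over short gaps. Annotators still need to add keyframes wherever an object changes speed or direction.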

AI-assisted annotation should be matched to the specifics of the project: for simple tasks, basic automatic suggestions can suffice, whereas complex ones, such as medical imaging or autonomous driving, require more powerful ML models and multi-level quality control. Additionally, any AI-assisted workflow should include quality control and review steps to ensure the reliability of the labels, especially when the data will be used to train mission-critical models.

Summary

In 2026, data annotation remains a key step in preparing high-quality datasets for AI and machine learning. Choosing the right platform depends on many factors, including the type of data, the scope of the project, the need for automation, and integration with existing ML pipelines. Modern platforms support a wide range of annotations: from simple bounding boxes and text classification to segmentation, object tracking in video, and 3D point cloud data.

Leading platforms in 2026, including Keylabs, Encord, Labelbox, SuperAnnotate, and Scale AI, offer different combinations of functionality, AI-assisted annotation, workflow management, and quality control.

Considering all these factors, the right choice of annotation platform is determined by a combination of project needs, data type, scale of work, automation capabilities, and security requirements. Platforms with advanced AI features, integration, and support for various types of annotations remain the optimal solution for enterprise and scalable AI projects, ensuring high-quality, efficient data preparation for AI.

FAQ

What is the role of data annotation in AI?

Data annotation provides labeled data that machine learning models require to learn patterns and make predictions. Accurate annotation ensures higher model performance and reliability in real-world applications.

What are the key components of modern data annotation platforms?

Modern platforms include multi-format support (images, video, text, audio, 3D), workflow management, collaboration tools, AI-assisted annotation, and quality control mechanisms. These components optimize efficiency and accuracy.

Which types of data annotations are commonly used?

Common types include bounding boxes, segmentation masks, keypoints, semantic/instance segmentation, text labeling, audio transcription, and 3D point cloud labeling. Each type is suited for specific AI tasks, such as computer vision or NLP.

How do AI-assisted annotation and automation improve workflows?

AI-assisted annotation provides initial labels for humans to verify or correct, reducing manual effort and increasing consistency. Automation speeds up labeling, particularly for large datasets or complex video and 3D data.

What are the main criteria for choosing a data annotation tool?

Tool selection criteria include data type, project scale, AI-assisted features, quality control, integration capabilities, security, and budget. These factors help match the platform to project requirements.

How do enterprise platforms like Keylabs and Encord stand out?

They support multimodal data, 3D annotation, advanced workflows, and ML-assisted labeling. These platforms are ideal for large-scale or complex projects requiring high precision and team collaboration.

What integration features are important in annotation platforms?

APIs, SDKs, and support for common ML data formats (COCO, YOLO, TFRecord) allow seamless connection with ML pipelines. Integration facilitates automated workflows and smooth data transfer.

How do annotation platforms ensure data security?

Platforms implement encryption at rest and in transit, role-based access control, audit logs, and compliance with GDPR and ISO standards. Some offer on-premises or private-cloud deployment for sensitive datasets.

What types of projects are best suited for managed workforce platforms?

Projects requiring high-volume, consistent labeling or enterprise-scale datasets benefit from managed workforce platforms like Taskmonk or CloudFactory. They combine human annotation expertise with platform tools for reliable quality.

Why is an annotation platform comparison important before choosing a tool?

A comparison allows teams to evaluate features, AI assistance, integration, security, and pricing. Using proper tool selection criteria ensures the chosen platform meets both technical and operational project needs.
