Automating audiovisual annotation

Automating the annotation of audiovisual data is essential in modern AI projects, where the volume of video, audio, and multimedia materials is constantly growing. Using AI-based tools for automated labeling and AI pre-labeling allows you to quickly and accurately mark up frames, audio signals, and subtitles, reducing manual labor and minimizing errors.

This approach not only accelerates the creation of high-quality datasets for training computer vision and speech processing models, but also ensures scalability, consistency, and quality control in complex multimedia projects.

Key Takeaways

- Automated labeling, combined with human review, accelerates dataset development, ensures quality, and improves safety.

- High-quality labeling of imagery, lidar, and radar data is essential for robust models.

- Choose tools that support multimodal workflows, compliance, and team scaling. Human review in the loop reduces systemic errors in safety-critical systems.

Fundamentals of audiovisual data annotation

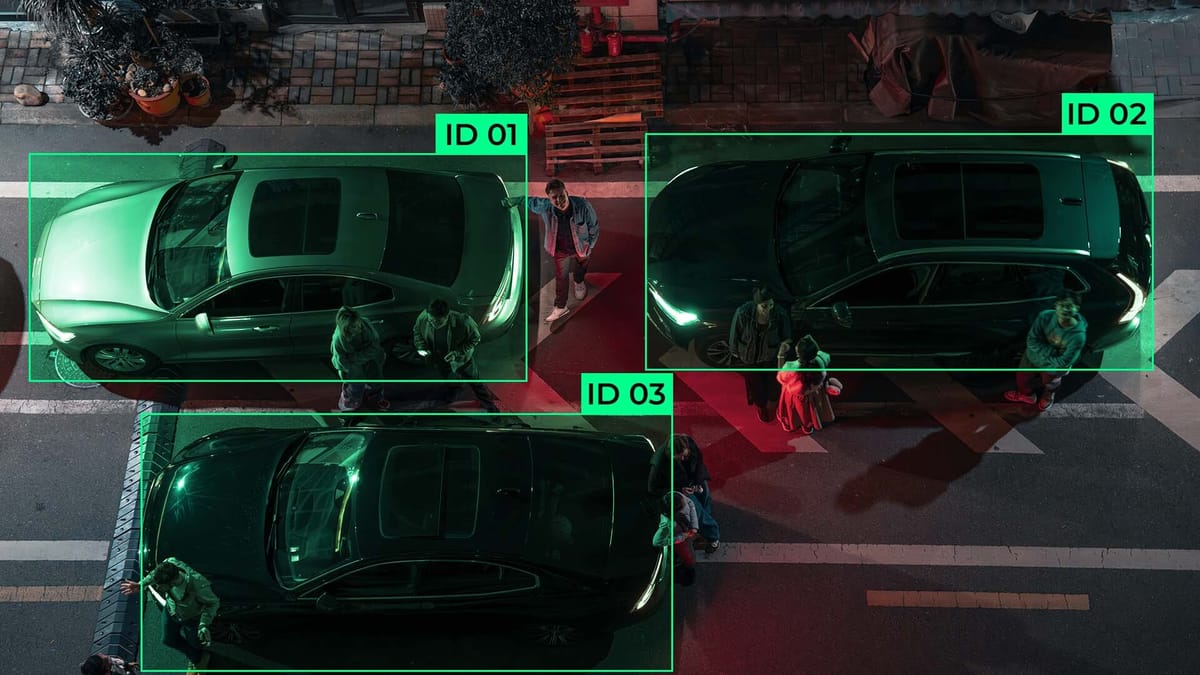

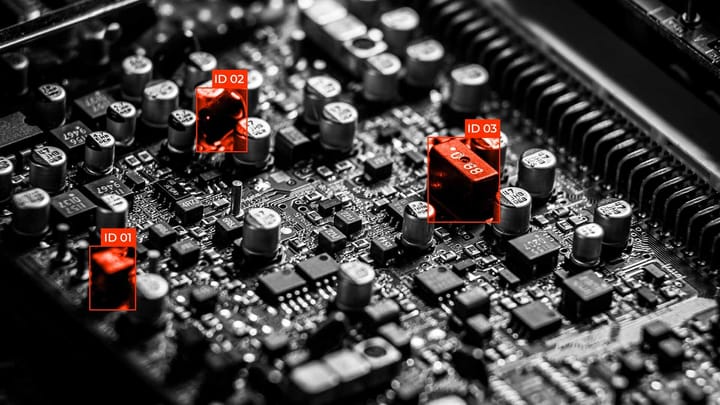

Modern computer vision, automatic speech recognition, and multimodal analysis systems are trained on labeled datasets. Cameras, microphones, lidar, and other sensors generate large volumes of audio and video streams, which themselves are arrays of pixels and sound waves. To transform this data into a basis for intelligent models, annotation is the process of adding semantic labels that explain precisely what is happening in a frame or an audio signal. This is how raw data is transformed into information that algorithms learn to recognize: objects, events, actions, intonations, and context.

Audiovisual data

It has a multimodal nature, and images and sound complement each other. In a video, these can be objects, their trajectories, or interactions between people or vehicles. In audio, these are speech, noise, emotional nuances, and background signals.

Annotation includes bounding boxes, segmentation, object tracking, speech transcription, speaker identification, sound classification, event timestamps, etc. In complex scenarios, synchronous annotation is used, where audio and video are labeled together, allowing the model to learn to establish correlations between lip movements and speech or between sound events and visual changes in the frame.

The annotation process

Typically, it combines AI pre-labeling algorithms, semi-supervised annotation, and human verification. Previous-generation models or computer vision tools can generate initial labels, which annotators then refine.

This approach increases speed optimization, scalability, and overall throughput, but requires quality control, agreed-upon instructions, and cross-annotator validation. It is essential to ensure label consistency because minor differences in how categories are interpreted affect the model's training results.

The quality of the labeled data determines the quality of the model. If the markup is inaccurate, incomplete, or biased, the system will reproduce these errors during inference.

In autonomous driving or video surveillance tasks, this can have serious consequences. Therefore, modern pipelines place great emphasis on dataset validation, class balancing, coverage of rare scenarios, and documentation of the annotation process.

AI-powered labeling and workflow automation

As data volumes and model complexity grow, companies are increasingly combining algorithmic processing with human control, creating hybrid systems that deliver scalability and consistent annotation quality. This approach reduces time spent on routine operations, minimizes human error, and maintains control over important decisions.

Pre-labeling and predictive segmentation

At this level, computer vision or natural language processing models independently create initial annotations. They highlight objects in images, segment scenes, classify documents, or identify entities in text. For example, algorithms can automatically generate bounding boxes for vehicles or perform semantic image segmentation using previously trained models.

Predictive segmentation enables the system to "guess" object boundaries and correct them in real time. This speeds up the annotator's work. Instead of creating a markup from scratch, the human only checks and refines the result.

Human-in-the-Loop

After automatic labeling, the results undergo a verification phase, during which an expert reviews complex or ambiguous cases. A human intervenes when the model shows low confidence or when objects require contextual understanding. This approach reduces the risk of accumulating errors and promotes continuous algorithmic improvement through feedback.

Human-verified data is reused to train the model further, creating a closed loop of quality improvement. The result is an adaptive system that requires less manual intervention over time.

Workflow agents and consistency

Intelligent agents automatically distribute tasks among annotators, track progress, monitor quality metrics, and initiate re-verification when inconsistencies are detected. They integrate with data management tools, CI/CD pipelines, and MLOps platforms. This ensures continuity of the process from data collection to model deployment. Consistency is achieved through standardization of instructions, automatic validation of annotation rules, and algorithmic analysis of inter-rater discrepancies. This reduces variability in results and increases confidence in training datasets.

Metrics for quality control

Quality control is an essential element of data labeling processes and dataset preparation for artificial intelligence systems. The use of standardized metrics enables you to objectively assess labeling quality, detect system errors, and maintain trust in the data at scale across large projects.

Managing large datasets, teams, and processes

In today's AI projects, the amount of information can reach millions of images, videos, or text documents, so without proper management, it is impossible to maintain high-quality annotations and team consistency. Scaling involves distributing tasks across multiple annotators and organizing processes to ensure transparency, control, and collaboration.

- An important aspect is versioning, which allows you to track changes in data and annotations, maintain a history of edits, and easily revert to previous states of datasets. This ensures the reliability and reproducibility of results, especially in projects where model retraining or error correction is underway.

- Role-based access allows you to assign user rights based on their roles within the team. Annotators can add or edit markup, managers can monitor progress and quality, and administrators can configure projects and manage resources. This approach increases data security and prevents accidental changes to critical datasets.

- The project dashboard provides centralized management of all aspects of your work. It offers quick access to analytics, statistics, and reports that help you make informed decisions, plan resources, and optimize workflows.

The platform landscape for autonomous vehicle labeling

Developing autonomous vehicles requires data labeling tools that can transform raw information from cameras, LiDAR, radar, and other sensors into structured annotations.

Due to the large volumes of video, 3D point clouds, and complexity of road situations, commercial platforms offer advanced capabilities, workflow automation, and quality control that allow teams to scale the preparation of datasets for autonomous driving.

Productivity, cost, and simulation time

Productivity, cost, and simulation time are key performance indicators for any AI and autonomous systems development process.

- Productivity measures how quickly and accurately a team or system can process large amounts of data and produce high-quality annotations for training models.

- Cost includes both direct software and infrastructure costs and indirect costs related to human time, manual data review, and error correction. Cost optimization is achieved by automating routine tasks, implementing AI-assisted tools, and distributing tasks between humans and machines.

- Simulation or model training time reflects the speed at which new algorithms are trained, tested, and integrated into a production environment. Reducing this time allows you to get results faster, test hypotheses, and adapt models to new data.

These three factors determine the effectiveness of engineering solutions in AI. The balance between high productivity, cost optimization, and modeling speed enables companies to accelerate development and gain a competitive advantage in the market.

FAQ

Why is automated AV labeling important for development time and model performance?

Automated data labeling reduces development time and improves model performance, enabling the rapid creation of significant, consistent datasets.

How is labeled data defined for different sensor types?

Labeled data for different sensor types is defined by adapting annotations to each sensor's specificities. For example, bounding boxes and semantic segmentation for cameras, 3D objects for LiDAR, and motion trajectories for radar.

How does multimodal data improve perceptual systems?

Multimodal data improves perceptual systems by combining information from multiple sensors (cameras, LiDAR, radar, etc.) to provide robust, contextually aware interpretation of the environment.

What is human-in-the-loop (HITL) validation, and why is it needed?

Human-in-the-loop (HITL) validation is the process by which an expert monitors or corrects AI solutions to improve the accuracy and reliability of models, especially in complex cases.

How do automated workflow agents improve consistency and throughput?

Automated workflow agents improve consistency and throughput, coordinate tasks, enforce annotation standards, and optimize the division of labor between annotators.

What quality assurance practices scale for large datasets?

Multi-level validation, consensus checks, and automated rule-based quality assurance detect common errors.

What platform features should teams evaluate for large-scale labeling?

Look for multimodal support, pre-labeling and active learning, robust quality assurance tools, role-based access, project dashboards, and integration hooks for machine learning pipelines.

What key performance indicators (KPIs) should be tracked to measure labeling success?

Key performance indicators (KPIs) include annotation accuracy, inter-annotator agreement, data processing speed, proportion of errors verified, and dataset coverage.

Comments ()