Self-Supervised Learning for AV Systems

Creating reliable autonomous driving systems requires processing colossal volumes of data, but the industry's main bottleneck remains annotating that data. Unlike classical computer vision, labeling for autonomous vehicles is a multidimensional task in which every object must stay consistent across space and time. The process is expensive because data from 3D lidars and an entire array of cameras has to be labeled together, which requires annotators who are skilled with complex spatial software.

Since a labeling error can lead to an accident in the real world, accuracy requirements for annotations are extremely high, which entails multi-stage audit and verification cycles. Today, training a safe autopilot requires millions of frames, each labeled with many factors taken into account. Because an hour of manual annotation remains expensive and skilled annotators are scarce, the industry urgently needs methods that reduce this burden without sacrificing training quality.

Quick Take

- SSL uses the inherent structure of video and sensor data to create its own training tasks.

- Efficiency is achieved through scene comparison, future prediction, part restoration, and sensor fusion.

- Combining large-scale self-supervised pretraining with careful manual fine-tuning balances speed and safety.

- In the future, cars will be able to adapt to new conditions in real time, instantly exchanging experience with other vehicles.

Nature and Advantages of Self-Learning

Artificial intelligence is increasingly learning in the same way as a human – through observation of the world. Instead of waiting for prompts from teachers, the model analyzes the environment and independently finds logical connections between objects. This allows for the use of vast amounts of information that previously simply sat idle on servers due to the lack of manual labeling.

The Essence of Self-Supervised Learning Technology

The core idea of self-supervised learning is that the machine creates training tasks for itself from existing data. For example, a model may intentionally hide part of an image and then try to predict what was there. Since it has the original frame, it can check itself and correct its errors without human intervention. This stage is often called unsupervised pretraining, because it prepares the model before specialists supply any real labels.

For comparison: supervised learning needs a human-provided label for every training example, while self-supervised learning derives its targets from the data itself and leaves human labels for the final fine-tuning stage.

Why SSL Works Ideally for Autonomous Vehicles

Autonomous cars are the best candidates for implementing label-efficient learning methods due to the specific structure of their sensors. Every trip creates natural prompts for the neural network that do not need to be invented artificially. The model sees the world simultaneously from different angles and through different devices, allowing it to verify its knowledge through the physical logic of space.

The main sources of signals for such training include:

- Temporal sequence. Video shows how objects move over time, which helps the AI learn inertia and object permanence.

- Multimodality. Data from cameras and lidars complement each other; thus, the model learns to recognize 3D objects by comparing an image with a point cloud.

- Physical consistency. The laws of gravity and the impossibility of cars suddenly disappearing provide the AI with a clear understanding of the rules of the real world.

Thanks to these factors, an autonomous vehicle learns to understand the road much faster and with higher quality. The machine begins to "feel" the space around it based on its own observational experience, making its knowledge much deeper than with the simple study of ready-made images.

Main SSL Approaches in AV

The transition from classical labeling to self-supervised learning methods became the main technological shift in autonomous vehicle development in 2026. Instead of relying on human prompts, SSL architectures use the internal logic of the data itself as a "training signal". This allows models to independently master complex concepts of geometry, physics, and motion, transforming petabytes of raw road recordings into structured knowledge.

The main approaches in this field are built on so-called "pretext tasks". The model learns to recognize objects by comparing frames over time, restoring hidden image fragments, or aligning data from different sensor types. Networks trained this way tend to be more robust and better at using context, even in unpredictable road situations.

Contrastive Learning

This method is based on the model's ability to compare different views of the same scene. The AI receives two slightly modified versions of a single frame (for example, different crops or color shifts) and learns that they show the same scene. At the same time, the model "pushes away" images of completely different scenes in its embedding space. This helps the neural network extract the most important features of objects while ignoring random visual noise.
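The pull-together, push-apart idea can be illustrated with an InfoNCE-style loss, one common contrastive objective. This is a minimal sketch, not the method of any particular AV stack: the three-number embeddings, the `temperature` value, and the function names are all assumptions made for the example.

```python
import math

def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def info_nce(anchor, positive, negatives, temperature=0.1):
    """Loss for one anchor: small when the anchor is closer to its
    positive than to every negative."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    m = max(logits)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum - logits[0]

# Hypothetical embeddings: two augmented views of one scene, plus two other scenes.
view_a = [0.9, 0.1, 0.0]
view_b = [0.8, 0.2, 0.1]
other_scenes = [[0.0, 1.0, 0.0], [0.1, 0.0, 0.9]]

# Correct pairing: the two views of the same scene are treated as a match.
good = info_nce(view_a, view_b, other_scenes)
# Wrong pairing: an unrelated scene is treated as the match.
bad = info_nce(view_a, other_scenes[0], [view_b, other_scenes[1]])
print(good < bad)
```

Training an encoder to minimize this loss over many frames is what moves views of the same scene together and unrelated scenes apart.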

Temporal Prediction

The video stream provides a unique opportunity to learn from the chronological sequence of events. The model receives a series of previous frames and tries to predict what the next frame will look like or what a pedestrian's trajectory will be in a second. Since the model already has the real recording of what actually happened, it uses it as a free reference answer. Such training develops the system's ability to understand the physics of motion and predict potentially dangerous situations before they occur.
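The "free reference answer" can be made concrete by slicing a recorded track into (past, next) pairs. The sketch below assumes a made-up pedestrian track in metres and uses a constant-velocity baseline as a stand-in for a learned predictor; the function names are hypothetical.

```python
def make_prediction_pairs(track, history=3):
    """Slice one recorded track into (past positions, next position) pairs.
    The 'label' is simply what the recording shows next."""
    pairs = []
    for t in range(history, len(track)):
        pairs.append((track[t - history:t], track[t]))
    return pairs

def constant_velocity(past):
    """Baseline predictor: assume the last observed step repeats."""
    (x1, y1), (x2, y2) = past[-2], past[-1]
    return (2 * x2 - x1, 2 * y2 - y1)

def mean_squared_error(pairs, predictor):
    """Average squared distance between predictions and recorded positions."""
    total = 0.0
    for past, target in pairs:
        px, py = predictor(past)
        total += (px - target[0]) ** 2 + (py - target[1]) ** 2
    return total / len(pairs)

# Made-up pedestrian positions (x, y) in metres, one per timestep.
track = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.1), (1.5, 0.3), (2.0, 0.6), (2.5, 1.0)]
pairs = make_prediction_pairs(track)
error = mean_squared_error(pairs, constant_velocity)
```

A learned model would replace `constant_velocity`, and the same error would serve as its training signal, with no annotator involved.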

Masked Modeling

In this approach, we intentionally "spoil" the input data: we cover part of an image or remove part of a point cloud from a lidar. The AI's task is to restore the lost fragments based on the context of what remains visible. To successfully complete a part of a sign or a fragment of a car, the model is forced to learn how these objects are constructed in reality. This creates a deep understanding of the world's structure, where the machine learns to recognize a whole object even if it sees only a part of it.
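A toy version of this mask-and-restore task is sketched below. It assumes each patch is summarized by a single number (for example, its mean intensity) and replaces the neural network with a trivial fill-in rule, so only the masking step and the loss are representative; all names are hypothetical.

```python
import random

def mask_patches(patches, mask_ratio=0.5, seed=0):
    """Hide a random subset of patches.
    Returns the corrupted input and the indices that were hidden."""
    rng = random.Random(seed)
    n_masked = max(1, int(len(patches) * mask_ratio))
    masked_idx = sorted(rng.sample(range(len(patches)), n_masked))
    corrupted = [None if i in masked_idx else p for i, p in enumerate(patches)]
    return corrupted, masked_idx

def fill_with_visible_mean(corrupted):
    """Stand-in for a model: fill each hidden patch with the mean of the visible ones."""
    visible = [p for p in corrupted if p is not None]
    mean = sum(visible) / len(visible)
    return [mean if p is None else p for p in corrupted]

def reconstruction_loss(original, reconstructed, masked_idx):
    """Mean squared error measured only on the positions that were hidden."""
    return sum((original[i] - reconstructed[i]) ** 2 for i in masked_idx) / len(masked_idx)

# Made-up patch values for one image.
patches = [0.2, 0.4, 0.6, 0.8, 0.5, 0.3, 0.7, 0.1]
corrupted, masked_idx = mask_patches(patches)
reconstructed = fill_with_visible_mean(corrupted)
loss = reconstruction_loss(patches, reconstructed, masked_idx)
```

Scoring only the hidden positions is the important design choice: the model gets no credit for copying what it can already see.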

Cross-Modal Learning

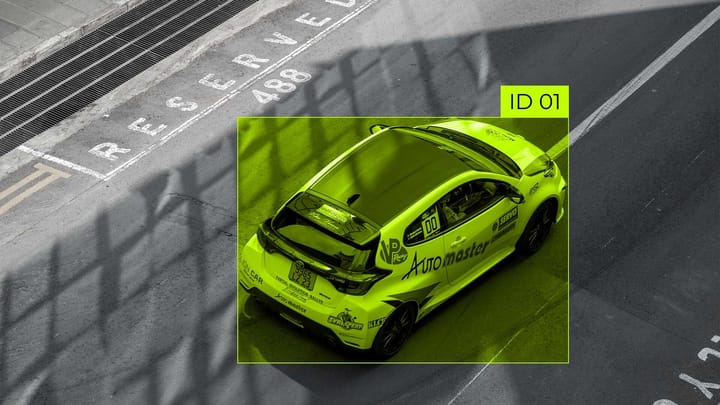

Autonomous vehicles have the advantage of different sensor types, which allow for cross-modal learning. The system compares data from cameras and lidars. The model learns to align these streams: it understands that a flat patch on a photograph and a convex set of points in space are the same truck. This "cooperation" of sensors allows the camera to learn depth perception, and the lidar to better distinguish object categories.
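One way the camera can "learn depth" from lidar is to project lidar points into the image and use their distances as depth targets for the pixels they hit. The sketch below assumes a simple pinhole camera, points already transformed into the camera frame by extrinsic calibration, and made-up intrinsics (`fx`, `fy`, `cx`, `cy`); it is an illustration, not a calibrated pipeline.

```python
import math

def project_to_image(point, fx, fy, cx, cy):
    """Pinhole projection of a 3D point in the camera frame
    (x right, y down, z forward). Returns (u, v, depth) or None."""
    x, y, z = point
    if z <= 0:
        return None  # point is behind the camera
    return (fx * x / z + cx, fy * y / z + cy, z)

def lidar_depth_targets(points, fx, fy, cx, cy, width, height):
    """Map pixel -> depth for lidar points that land inside the image.
    If several points hit one pixel, the nearest one wins."""
    targets = {}
    for p in points:
        proj = project_to_image(p, fx, fy, cx, cy)
        if proj is None:
            continue
        u, v, depth = proj
        key = (math.floor(u), math.floor(v))
        if 0 <= key[0] < width and 0 <= key[1] < height:
            if key not in targets or depth < targets[key]:
                targets[key] = depth
    return targets

# Made-up lidar points in metres, already expressed in the camera frame.
points = [
    (0.0, 0.0, 10.0),   # straight ahead
    (1.0, 0.5, 20.0),   # ahead, slightly right and down
    (0.0, 0.0, 25.0),   # same pixel as the first point, but farther away
    (0.0, 0.0, -5.0),   # behind the camera
    (30.0, 0.0, 10.0),  # outside the field of view
]
targets = lidar_depth_targets(points, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0,
                              width=1280, height=720)
```

The resulting sparse depth map can supervise a camera-only depth network, so the lidar acts as the teacher and no human label is needed.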

Hybrid Strategy

In 2026, complete abandonment of human labeling is not yet possible; however, the human role has fundamentally changed. SSL takes on the majority of the "grunt work" of learning visual features, leaving experts with only the final stage of training and the verification of the most complex scenarios. This hybrid approach has become the gold standard, combining the speed of artificial intelligence with the reliability of human control.

Practical Pipeline: SSL + Supervised

The modern development process is built around the idea of unsupervised pretraining on gigantic volumes of data. First, the model "watches" millions of hours of video without a single label, learning to understand what roads, shadows, and movement in space look like. Once the knowledge base is formed, the stage of working with a small but perfectly labeled dataset begins.

The refinement process looks like this:

- Massive SSL Pretraining. The model learns on petabytes of raw data, forming a general understanding of the world.

- Fine-tuning. Precise tuning on thousands of frames manually labeled by specialists, to teach the AI task-specific rules.

- Iterative Improvement. The system finds frames in which it is uncertain and sends them to annotators for clarification, constantly increasing its accuracy.

This chain makes training extremely efficient, as every human label now provides the model with more useful information.
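The iterative improvement step is often implemented as uncertainty sampling. The sketch below assumes the model outputs class probabilities per frame and ranks frames by prediction entropy; the frame ids, probabilities, and `budget` parameter are made up for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy of a probability vector; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_annotation(predictions, budget):
    """Return the ids of the `budget` frames with the most uncertain predictions."""
    ranked = sorted(predictions, key=lambda fid: entropy(predictions[fid]), reverse=True)
    return ranked[:budget]

# Hypothetical per-frame class probabilities from the current model.
predictions = {
    "frame_001": [0.98, 0.01, 0.01],
    "frame_002": [0.40, 0.35, 0.25],
    "frame_003": [0.70, 0.20, 0.10],
    "frame_004": [0.34, 0.33, 0.33],
}
queue = select_for_annotation(predictions, budget=2)
print(queue)  # ['frame_004', 'frame_002']
```

Frames the model already handles confidently, such as `frame_001`, never reach an annotator, which is why each human label carries more information.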

Impact on Safety and Certification

Although SSL significantly reduces dependence on expensive labeling, it does not remove responsibility for safety. Certification of autonomous vehicles requires clear proof that the model actually recognized a pedestrian's movement. Since self-learning methods can sometimes learn incorrect patterns, quality control remains a priority.

Important aspects of certification in the SSL era include:

- Validation of downstream models – final testing of the system on independent tests that were never used during self-learning.

- Analysis of internal representations – checking whether the AI correctly "understands" the geometry and volume of objects through methods of visualizing its "thoughts".

- Data bias control – ensuring that a model trained via SSL is equally safe everywhere, not only in the conditions that dominate its training data.
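A simple form of bias control is to break a held-out metric down by driving condition instead of reporting a single average. The sketch below assumes a held-out set in which each ground-truth pedestrian is tagged with a condition and a detected/missed flag; the records and function names are invented for the example.

```python
from collections import defaultdict

def recall_by_condition(records):
    """records: iterable of (condition, was_detected) pairs, one per
    ground-truth pedestrian in a held-out test set."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for condition, detected in records:
        totals[condition] += 1
        hits[condition] += int(detected)
    return {c: hits[c] / totals[c] for c in totals}

def worst_gap(recalls):
    """Difference between the best and the worst condition."""
    return max(recalls.values()) - min(recalls.values())

# Made-up evaluation records.
records = [
    ("day", True), ("day", True), ("day", True), ("day", True),
    ("night", True), ("night", True), ("night", True), ("night", False),
    ("snow", True), ("snow", False), ("snow", True), ("snow", False),
]
recalls = recall_by_condition(records)
gap = worst_gap(recalls)
```

Here the overall recall of 0.75 would hide the fact that half of the pedestrians are missed in snow, which is the kind of gap a certification review needs to see.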

The Future of SSL in Autonomous Systems

The future of autonomous systems lies in making them behave like living organisms that learn every second they are on the road. With SSL, autonomous vehicles stop being static programs that depend on constant updates "from the cloud". They become systems that keep improving by analyzing every new situation they encounter in real time.

Continual Learning and Multimodal Foundation Models

Traditionally, models are trained once and remain unchanged until the next release. However, the concept of continual learning changes this approach: cars learn constantly without forgetting old skills. When one autonomous vehicle encounters a unique situation, it uses SSL to understand it and shares this experience with the entire network. This creates self-improving fleets – fleets where the experience of one machine instantly becomes the asset of thousands of others.
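One common way to keep old skills while learning new ones is rehearsal: a small store of past examples is mixed into every new training batch. The sketch below uses reservoir sampling to keep a fixed-size, uniformly sampled buffer. The class name, capacity, and scenario ids are assumptions for illustration, not a description of any production fleet.

```python
import random

class RehearsalBuffer:
    """Fixed-size store of past examples, filled by reservoir sampling so
    that every example seen so far has an equal chance of being kept."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Keep the new example with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def mixed_batch(self, new_examples, n_replay):
        """New examples plus a random sample of stored ones for rehearsal."""
        replay = self.rng.sample(self.items, min(n_replay, len(self.items)))
        return list(new_examples) + replay

# Made-up stream of 100 archived scenarios, then a batch from a new condition.
buffer = RehearsalBuffer(capacity=10)
for i in range(100):
    buffer.add(f"scenario_{i:03d}")
batch = buffer.mixed_batch(["snow_1", "snow_2"], n_replay=3)
```

Training on `batch` exposes the model to the new snow scenes while it keeps seeing a sample of older scenarios, which limits forgetting.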

One of the most complex tasks is online adaptation – the AI's ability to instantly adjust to new conditions, such as a sharp transition from sunny weather to a snowstorm. SSL allows the model to adapt its "vision" on the fly, analyzing changes in data structure without waiting for new instructions from developers.

The centerpiece of this future is multimodal foundation models for AV. These are gigantic neural networks pretrained on colossal arrays of video, text, and sensor data. They possess a "general understanding of the world" and know that a ball rolling onto the road often means a child running after it. SSL is the key to creating such models, as it allows them to absorb knowledge about physics and human behavior boundlessly, not limited to what an annotator was able to label.

FAQ

How exactly does SSL help the model distinguish objects in fog or heavy rain?

Thanks to cross-modal learning, the model learns which sensor stays more reliable in a given condition, so when the camera image degrades it can lean on the other signals and combine them into a stable representation of the object.

Won't self-learning lead to the accumulation of logical errors without human supervision?

The risk exists, so SSL is used as a preparation stage, followed by a validation phase on held-out control tests. Contrastive objectives also help: by pushing apart the representations of unrelated scenes, they discourage spurious associations.

What specific computing power is needed for the pretraining stage in 2026?

Processing petabytes of data requires specialized AI clusters with thousands of GPUs working for weeks. However, these costs are still significantly lower than paying thousands of annotators for the same period.

How does SSL affect the autopilot's ability to understand traffic controller gestures?

While SSL learns the physics of motion itself, specific social signals like gestures require a final Supervised Learning stage. However, the model already knows the structure of the human body, so it learns gestures much faster.

Does SSL work for predicting the behavior of other human drivers?

Yes, through temporal prediction, the model analyzes millions of hours of video of real human behavior. This allows it to learn patterns of aggressive or hesitant driving without special marks in the dataset.

How is the problem of "forgetting" old skills solved in continual learning?

Engineers use "rehearsal" techniques, where the model is periodically retrained and re-checked on a stored set of critical archived scenarios alongside the new data.

Will SSL replace traditional methods of programming autonomous vehicle logic?

SSL replaces only the "perception" and "prediction" parts, but decision-making logic often remains hybrid, combining deep neural understanding with hard-coded ethical and safety rules.
