Long-Tail Scenarios in Autonomous Driving: Handling Rare Events & Edge Cases

Most modern algorithms cope well with typical road situations, but the real challenge lies in rare events: situations that occur very infrequently yet can have a critical impact on safety. Together they form the so-called long tail of the event distribution, in which a large share of the possible scenarios each occur rarely but cannot be ignored.

The development of autonomous vehicles that can reliably respond to rare scenarios and corner cases requires integrating large amounts of data, simulations, and advanced modeling and forecasting methods.

Key Takeaways

- Curated, high-value data clips can teach a model more per sample than the same volume of raw driving miles.

- Simulation helps, but only when seeded with authentic, labeled clips to avoid drift.

- Industry practices like AI tagging and uncertainty-driven loops shorten the path from capturing an event in the field to shipping a fix in an over-the-air (OTA) update.

- Metrics should reward tail-aware evaluation and cost per useful event for better safety outcomes.
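To make the last takeaway concrete, here is a minimal sketch of a tail-aware evaluation next to a cost-per-useful-event figure. The function names, scenario tags, and all numbers are hypothetical and chosen only for illustration; they do not come from any real fleet or benchmark.

```python
from collections import defaultdict


def per_slice_recall(results):
    """Compute recall separately for each scenario tag.

    results: iterable of (scenario_tag, detected) pairs, where detected
    is True when the system handled the event correctly.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for tag, detected in results:
        totals[tag] += 1
        if detected:
            hits[tag] += 1
    return {tag: hits[tag] / totals[tag] for tag in totals}


def cost_per_useful_event(total_cost, useful_events):
    """Collection and labeling cost divided by the number of useful events."""
    if useful_events <= 0:
        raise ValueError("useful_events must be positive")
    return total_cost / useful_events


# Hypothetical evaluation log: many nominal events, few rare ones.
results = (
    [("nominal", True)] * 980
    + [("nominal", False)] * 10
    + [("debris_on_road", True)] * 4
    + [("debris_on_road", False)] * 6
)

recalls = per_slice_recall(results)
overall = sum(detected for _, detected in results) / len(results)
worst_tag = min(recalls, key=recalls.get)

print(f"overall recall: {overall:.3f}")
print(f"worst slice: {worst_tag} ({recalls[worst_tag]:.2f})")
print(f"cost per useful event: {cost_per_useful_event(5000.0, 25):.2f}")
```

In this made-up log the aggregate recall looks strong while the rare slice is handled correctly less than half the time, which is the kind of gap an aggregate or mileage-based metric hides.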

Industry approaches to long-tail scenarios: smart data, tagging, and domain adaptation

To effectively handle rare scenarios and corner cases, the autonomous driving industry employs several strategies to compensate for the limitations of conventional training datasets. One of the main approaches is the use of smart data — selective, high-quality data that maximally covers the statistical long tail of events. Instead of collecting huge volumes of standard data, engineers focus on rare or critical scenarios that provide the greatest increase in system safety.
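One simple way to express this kind of selection is to score each clip by how rare its tags are and keep only the top-scoring clips within a labeling budget. The sketch below assumes a hypothetical clip format (a dict with `id` and `tags`) and an inverse-frequency score; real pipelines may use very different criteria.

```python
from collections import Counter


def select_rare_clips(clips, budget):
    """Return the ids of the `budget` clips whose tags are rarest.

    clips: list of dicts with keys 'id' (str) and 'tags' (list of str).
    A clip's score is the sum of inverse frequencies of its tags, so
    clips carrying uncommon tags rank higher.
    """
    tag_counts = Counter(tag for clip in clips for tag in clip["tags"])

    def rarity(clip):
        return sum(1.0 / tag_counts[tag] for tag in clip["tags"])

    ranked = sorted(clips, key=rarity, reverse=True)
    return [clip["id"] for clip in ranked[:budget]]


# Hypothetical clip pool: mostly clear-weather highway driving.
clips = [
    {"id": "a", "tags": ["highway", "clear"]},
    {"id": "b", "tags": ["highway", "clear"]},
    {"id": "c", "tags": ["highway", "rain"]},
    {"id": "d", "tags": ["urban", "clear", "animal_crossing"]},
    {"id": "e", "tags": ["highway", "clear"]},
]

selected = select_rare_clips(clips, budget=2)
print(selected)
```

With this pool, the two clips carrying the uncommon tags (`d` and `c`) are selected ahead of the three near-identical highway clips.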

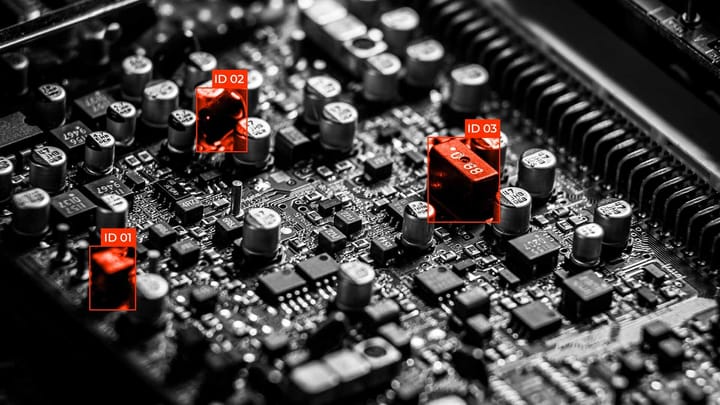

A key tool in this process is tagging — the precise labeling and classification of events in datasets. Thanks to careful tagging and categorization, algorithms can more accurately respond to atypical situations and predict the behavior of road users in complex scenarios.
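As an illustration of what a tag can look like in practice, the sketch below attaches scenario tags to a clip using a few hand-written rules. It is a deliberately simple rule-based stand-in, not the learned (AI) tagging that production systems may use; the `Clip` fields, tag names, and the 1.5-second threshold are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Clip:
    """Hypothetical per-clip metadata used only for this example."""
    clip_id: str
    weather: str
    min_time_to_collision_s: float
    actor_types: list = field(default_factory=list)
    tags: set = field(default_factory=set)


ADVERSE_WEATHER = {"rain", "snow", "fog"}
UNUSUAL_ACTORS = {"animal", "wheelchair", "scooter"}


def tag_clip(clip, ttc_threshold_s=1.5):
    """Add scenario tags to a clip based on simple metadata rules."""
    if clip.weather in ADVERSE_WEATHER:
        clip.tags.add("adverse_weather")
    if clip.min_time_to_collision_s < ttc_threshold_s:
        clip.tags.add("near_miss")
    if any(actor in UNUSUAL_ACTORS for actor in clip.actor_types):
        clip.tags.add("unusual_actor")
    if not clip.tags:
        clip.tags.add("nominal")  # nothing noteworthy was detected
    return clip


rare = tag_clip(Clip("c1", "snow", 0.9, ["car", "animal"]))
common = tag_clip(Clip("c2", "clear", 6.0, ["car"]))
print(sorted(rare.tags))
print(sorted(common.tags))
```

Once clips carry tags like these, downstream steps such as selection and per-scenario evaluation can filter or group by tag instead of scanning raw sensor data.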

Domain adaptation is a technique for adapting models to new conditions when there is a distribution mismatch between the training data and the real environment. For example, a model trained on clear-weather city data may perform poorly in rain, in snow, or on other types of roads. Domain adaptation makes it possible to transfer knowledge from one domain to another, reducing the risks associated with rare scenarios and increasing the system's resilience to unexpected events.
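Domain adaptation covers many methods. One of the simplest is importance weighting, which reweights training samples so that their mix of conditions matches the deployment environment. The sketch below assumes each sample has a single discrete condition label (here, weather) and uses invented proportions; it illustrates the idea and is not a description of any particular production system.

```python
from collections import Counter


def importance_weights(source_conditions, target_conditions):
    """Weight per condition: w(c) = p_target(c) / p_source(c).

    Only conditions present in the source data receive a weight.
    Reweighting cannot compensate for conditions the source data
    never contains; those need new data or other methods.
    """
    src = Counter(source_conditions)
    tgt = Counter(target_conditions)
    n_src = len(source_conditions)
    n_tgt = len(target_conditions)
    return {c: (tgt[c] / n_tgt) / (src[c] / n_src) for c in src}


def weighted_mean_loss(losses, conditions, weights):
    """Average per-sample losses using the weight of each sample's condition."""
    total = sum(weights[c] * loss for loss, c in zip(losses, conditions))
    norm = sum(weights[c] for c in conditions)
    return total / norm


# Hypothetical data: training is 90% clear weather, deployment is 50/50.
source = ["clear"] * 90 + ["rain"] * 10
target = ["clear"] * 50 + ["rain"] * 50

weights = importance_weights(source, target)
print(weights)

# Made-up per-sample losses: the model does worse in rain.
losses = [0.1] * 90 + [0.9] * 10
print(weighted_mean_loss(losses, source, weights))
```

Under these assumptions the rare rain samples are upweighted, so the weighted loss reflects the deployment mix rather than the clear-weather-heavy training mix.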

Long-tail scenarios and rare events: intelligent data engines in practice

In the practice of autonomous driving, processing rare scenarios and corner cases requires not just large amounts of data, but also intelligent processing. Intelligent data engines are systems that automate the collection, classification, annotation, and management of data, optimizing the training of models for statistical long-tail events.

In addition, such platforms support flexible tagging, enabling autonomous driving systems to accurately identify corner cases and predict the behavior of road users in complex, unpredictable situations. Combined with domain adaptation methods, intelligent data engines help create models that can act safely even in scenarios that rarely occur in real life but can be critical for safety.
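One building block of such an engine is an uncertainty-driven loop: clips on which the current model is least certain are sent for annotation first. The sketch below ranks clips by the entropy of the model's predicted class probabilities. The clip ids and probability values are hypothetical, and entropy is only one of several possible uncertainty measures.

```python
import heapq
import math


def predictive_entropy(probs):
    """Shannon entropy (in nats) of a list of class probabilities."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def prioritize_for_labeling(predictions, k):
    """Return the k clip ids with the highest predictive entropy.

    predictions: dict mapping clip id to a list of class probabilities
    produced by the current model for that clip.
    """
    return heapq.nlargest(
        k,
        predictions,
        key=lambda clip_id: predictive_entropy(predictions[clip_id]),
    )


# Hypothetical model outputs for three clips.
predictions = {
    "clip_a": [0.98, 0.01, 0.01],  # confident prediction
    "clip_b": [0.40, 0.35, 0.25],  # highly uncertain
    "clip_c": [0.70, 0.20, 0.10],  # moderately uncertain
}

queue = prioritize_for_labeling(predictions, k=2)
print(queue)
```

The confident clip is left out of the labeling queue, which keeps annotation effort focused on the cases where the model is most likely to be wrong.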

Simulation as amplifier, reality as seed: building trustworthy scenario coverage

Metrics and partnerships that move safety, not vanity

Summary

Autonomous driving faces a complex challenge: reliably responding to rare, atypical road events that create long-tail scenarios. An effective solution lies not only in increasing the amount of data, but also in intelligently managing it: intelligent data engines automatically identify, label, and prioritize critical scenarios for training models.

Successful industrial practice combines real-world data and simulations, ensuring a balance between reliability and scale of coverage of rare scenarios. The use of domain adaptation and constant feedback helps reduce distribution mismatch, increasing the reliability of algorithms in unpredictable conditions.

FAQ

What are long-tail scenarios in autonomous driving?

Long-tail scenarios are rare and atypical driving situations that occur infrequently but can have critical safety implications. They represent the statistical long tail of real-world events that standard datasets may not fully cover.

Why are rare scenarios challenging for AV systems?

They are difficult because models are often trained on common driving conditions, leading to a distribution mismatch when encountering unusual events. This increases the risk of incorrect decisions in corner cases.

How do intelligent data engines help in handling rare events?

Intelligent data engines automate the collection, tagging, and prioritization of critical scenarios, ensuring that models learn effectively from rare scenarios without relying solely on massive datasets.

What is the role of simulation in long-tail coverage?

Simulation acts as an amplifier, generating corner cases and rare events at scale. It allows testing hazardous or unusual scenarios safely while extending coverage beyond the limits of real-world data.

Why is reality considered the “seed” in scenario development?

Real-world data provides high-fidelity examples of actual driving behavior and rare scenarios, forming the foundation for training and guiding simulations. Without this seed, synthetic scenarios may diverge from reality, causing a distribution mismatch.

How does domain adaptation improve AV safety?

Domain adaptation helps models generalize from training data to new conditions by reducing distribution mismatch. This makes AVs better able to handle unexpected, rare scenarios in diverse environments.

What is the importance of tagging in AV datasets?

Tagging identifies and labels corner cases and rare scenarios, allowing models to focus on critical events. Proper tagging improves model accuracy and ensures coverage of the statistical long tail.

How do industry partnerships enhance scenario coverage?

Partnerships allow sharing edge-case data and best practices, expanding the range of rare scenarios covered. Collaborative efforts reduce blind spots and accelerate the safe development of AVs.

Which metrics truly reflect AV safety rather than vanity?

Metrics should measure the ability to handle corner cases, cover rare scenarios, and reduce near-misses. Metrics like total miles driven are often misleading if they do not address the statistical long tail of events.

What is the combined strategy to handle long-tail scenarios effectively?

The most effective approach combines real-world seeds, simulation amplification, intelligent data engines, tagging, and domain adaptation. Together, they address rare scenarios, distribution mismatch, and corner cases, ensuring robust and trustworthy AV performance.
