Domain adaptation for autonomous driving

Domain adaptation for autonomous driving enables models to generalize knowledge across datasets and conditions. Because of domain shift (a change in the data distribution between training and deployment), a model trained on one dataset may lose accuracy in new environments. Transfer learning methods and feature alignment strategies improve cross-dataset generalization, reducing the risk of errors in real-world driving scenarios and increasing the reliability of autonomous systems.

Key Takeaways

- Performance degrades when models encounter weather conditions, sensor configurations, or camera settings that differ from their training data.

- Image- and object-level alignment, combined with adversarial training, improves detection in fog and rain.

- Cross-dataset evaluation with normalized metrics is essential to measure true transfer.

What is domain adaptation?

Domain adaptation is a family of machine learning techniques that let a model trained on data from one distribution (the source domain) keep performing well on data from a different but related distribution (the target domain), often when few or no labels are available for the target domain.

In the field of autonomous driving, this is necessary because car perception systems are trained on specific datasets. Yet, they encounter diverse road types, weather conditions, vehicle types, lighting conditions, and urban infrastructure in the real world.

The main goal of domain adaptation is to reduce the difference between the source domain on which the model was trained and the target domain on which it must operate. Without such adaptation, the accuracy of computer vision systems decreases, and errors in recognizing pedestrians, road signs, or obstacles can lead to dangerous situations.

Ways to implement domain adaptation

There are several main approaches:

- Feature-level adaptation, in which the model is trained to extract universal features that generalize well across environments.

- Data-level adaptation, in which the data is modified or augmented to better align with the target domain.

- Unsupervised domain adaptation, where the model is adapted to a new domain without labeled target data, using only raw images from the car's cameras together with the labeled source data.
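One common way to adapt without target labels is self-training: a model trained on the labeled source domain predicts on unlabeled target images, and only its most confident predictions are kept as pseudo-labels for further training. The sketch below assumes detections arrive as hypothetical `(class_name, confidence)` pairs and uses an assumed threshold of 0.8; it shows only the selection step, not the full training loop.

```python
from typing import List, Tuple

Detection = Tuple[str, float]  # (predicted class, confidence score)


def select_pseudo_labels(detections: List[Detection], threshold: float = 0.8) -> List[Detection]:
    """Keep only confident predictions on unlabeled target images.

    The 0.8 default is a hypothetical value; in practice it is tuned,
    sometimes separately for each class.
    """
    return [(cls, score) for cls, score in detections if score >= threshold]


# Made-up predictions of a source-trained detector on one foggy target image.
target_predictions = [("car", 0.95), ("pedestrian", 0.42), ("car", 0.81), ("cyclist", 0.30)]
pseudo_labels = select_pseudo_labels(target_predictions)
print(pseudo_labels)  # [('car', 0.95), ('car', 0.81)]
```

A higher threshold yields fewer but cleaner pseudo-labels; a lower one adds more target examples at the cost of more label noise.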

An important tool is the use of simulation environments, which allow scenes to be generated under different weather conditions, lighting, and traffic scenarios. The models are then trained to transfer knowledge from synthetic data to real-world data. In modern autonomous driving systems, domain adaptation is combined with transfer learning, self-supervised learning, and continual learning, allowing cars to gradually improve their models during operation.
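Generating varied scenes in a simulator often comes down to sampling scene parameters at random for each episode, a technique known as domain randomization. The sketch below uses hypothetical parameter names and ranges (`fog_density`, `rain_intensity`, `sun_elevation_deg`, `num_vehicles`); a real simulator exposes its own settings.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneParams:
    fog_density: float        # 0 = clear, 1 = dense fog (hypothetical scale)
    rain_intensity: float     # 0 = dry, 1 = heavy rain (hypothetical scale)
    sun_elevation_deg: float  # lighting: low or negative values approximate dusk/night
    num_vehicles: int         # traffic density


# Hypothetical sampling ranges for each parameter.
RANGES = {
    "fog_density": (0.0, 1.0),
    "rain_intensity": (0.0, 1.0),
    "sun_elevation_deg": (-10.0, 90.0),
    "num_vehicles": (0, 50),
}


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one randomized scene configuration."""
    return SceneParams(
        fog_density=rng.uniform(*RANGES["fog_density"]),
        rain_intensity=rng.uniform(*RANGES["rain_intensity"]),
        sun_elevation_deg=rng.uniform(*RANGES["sun_elevation_deg"]),
        num_vehicles=rng.randint(*RANGES["num_vehicles"]),  # inclusive on both ends
    )


rng = random.Random(42)  # fixed seed so the generated scene list is reproducible
scenes = [sample_scene(rng) for _ in range(3)]
for scene in scenes:
    print(scene)
```

Seeding the generator makes the synthetic dataset reproducible, which matters when comparing adaptation methods trained on it.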

Cross-dataset evaluation

Cross-dataset evaluation verifies the quality of models and datasets by testing them on independent datasets. It is informative because it shows how well a model generalizes and whether it performs consistently across different conditions.

Just because a model performs well on one dataset does not mean it will perform well in the real world. Cross-dataset evaluation allows you to check whether the results are consistent across new data. If a model performs similarly across multiple independent datasets, it indicates that the algorithm is robust and generalizable.
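A common way to organize cross-dataset evaluation is a train/test matrix: each model trained on one dataset is scored on every dataset, and the off-diagonal cells show transfer. In the sketch below, the dataset names `A`, `B`, `C` and all scores are hypothetical placeholders, not reported results.

```python
from statistics import mean, pstdev
from typing import Dict, Tuple

# scores[(train_set, test_set)] = metric value such as mAP (hypothetical numbers).
scores: Dict[Tuple[str, str], float] = {
    ("A", "A"): 0.62, ("A", "B"): 0.41, ("A", "C"): 0.38,
    ("B", "A"): 0.45, ("B", "B"): 0.58, ("B", "C"): 0.40,
}


def transfer_summary(scores: Dict[Tuple[str, str], float], train_set: str) -> Dict[str, float]:
    """Summarize how a model trained on `train_set` performs on the other datasets."""
    in_domain = scores[(train_set, train_set)]
    cross = [v for (tr, te), v in scores.items() if tr == train_set and te != train_set]
    return {
        "in_domain": in_domain,
        "cross_mean": mean(cross),
        "cross_spread": pstdev(cross),  # low spread = consistent behavior across datasets
    }


for train_set in ("A", "B"):
    print(train_set, transfer_summary(scores, train_set))
```

A large gap between `in_domain` and `cross_mean` signals poor transfer even when the in-domain score looks strong.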

Evaluation protocols, label matching, and data pipelines

An important part of this approach is evaluation protocols, which define the rules for using different datasets for training, validation, and testing. Clear protocols allow you to compare results across runs, ensuring experiment reproducibility.

Another important aspect is label alignment. Different datasets may use different annotation schemes: classes may be named differently, defined at different levels of detail, or labeled according to different rules. Therefore, before a comparative evaluation, the labels must be aligned so that models are evaluated on the same categories. This is done by creating a common class taxonomy or defining category mappings.
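A category mapping can be as simple as a lookup table from each dataset's class names to a shared taxonomy. The dataset names, class names, and the choice to drop unmapped classes below are all assumptions for illustration.

```python
from typing import Dict, List, Optional

# Hypothetical per-dataset mappings into a common taxonomy.
# None means the class has no counterpart and is excluded from evaluation.
LABEL_MAP: Dict[str, Dict[str, Optional[str]]] = {
    "dataset_a": {"person": "pedestrian", "rider": "cyclist", "car": "car", "truck": "truck"},
    "dataset_b": {"Pedestrian": "pedestrian", "Cyclist": "cyclist", "Car": "car", "Van": "car", "Misc": None},
}


def to_common(dataset: str, labels: List[str]) -> List[str]:
    """Translate dataset-specific labels, dropping classes mapped to None.

    Unknown labels raise KeyError so that gaps in the mapping are noticed
    instead of silently skewing the evaluation.
    """
    mapping = LABEL_MAP[dataset]
    common = [mapping[label] for label in labels]
    return [c for c in common if c is not None]


print(to_common("dataset_b", ["Car", "Van", "Misc", "Pedestrian"]))  # ['car', 'car', 'pedestrian']
```

Merging finer classes (here `Van` into `car`) changes the scores, so the mapping itself should be documented as part of the evaluation protocol.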

Data pipelines also play an important role. They cover data preparation, format standardization, image or text normalization, and unified procedures for running models. If pipelines differ between experiments, the results may be incorrect or incomparable, which is why many modern studies use standardized evaluation pipelines.
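One way to keep preprocessing identical across experiments is to define it once in an immutable configuration and apply the same function to every dataset. The sketch below assumes per-channel standardization of 8-bit RGB pixels; the mean and standard deviation are the widely used ImageNet statistics, chosen here only as an example.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class PreprocessConfig:
    """One preprocessing definition reused for every dataset in an experiment."""
    pixel_scale: float = 255.0
    mean: Tuple[float, float, float] = (0.485, 0.456, 0.406)  # ImageNet statistics (example)
    std: Tuple[float, float, float] = (0.229, 0.224, 0.225)


def normalize_pixel(rgb: Sequence[int], cfg: PreprocessConfig) -> Tuple[float, ...]:
    """Scale an 8-bit RGB pixel to [0, 1] and standardize each channel."""
    return tuple((value / cfg.pixel_scale - m) / s for value, m, s in zip(rgb, cfg.mean, cfg.std))


CONFIG = PreprocessConfig()  # frozen, so no experiment can modify it in place

# The same function and config are applied to images from every dataset.
print(normalize_pixel((255, 128, 0), CONFIG))
```

Making the dataclass frozen turns an accidental change to the shared settings into an immediate error rather than a silent difference between runs.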

Evaluation metrics

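To summarize results, metrics such as mAP are computed on each dataset and then combined into aggregate scores, for example the mean across target datasets or the drop relative to source-domain performance, so that models can be compared across datasets.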
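A simple aggregate is the relative performance drop: the fraction of source-domain performance a model loses on each target domain. The sketch below assumes one source score and a dictionary of target scores; the weather names and mAP values are hypothetical.

```python
from typing import Dict


def relative_drop(source_score: float, target_score: float) -> float:
    """Fraction of source-domain performance lost on a target domain."""
    if source_score <= 0:
        raise ValueError("source_score must be positive")
    return (source_score - target_score) / source_score


def aggregate(source_score: float, target_scores: Dict[str, float]) -> Dict[str, float]:
    """Combine per-target scores into mean and worst-case summaries."""
    drops = {name: relative_drop(source_score, s) for name, s in target_scores.items()}
    return {
        "mean_target_score": sum(target_scores.values()) / len(target_scores),
        "mean_relative_drop": sum(drops.values()) / len(drops),
        "worst_relative_drop": max(drops.values()),
    }


# Hypothetical mAP values: clear-weather source vs. two adverse-weather targets.
print(aggregate(0.60, {"fog": 0.36, "rain": 0.45}))
```

Normalizing by the source score makes drops comparable between models with different absolute accuracy, and reporting the worst case keeps a single bad domain from being hidden by the average.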

Weather-induced domain gaps: foggy and rainy scenarios

Weather-induced domain gaps, such as those in foggy and rainy scenarios, are among the most important problems caused by differences between the conditions in which a model was trained and the actual weather on the road. Autonomous driving training datasets consist largely of images taken in good weather: in daylight, on dry roads, and with high visibility. In real-world environments, however, vehicles often encounter fog, rain, wet road surfaces, or reduced scene contrast. This creates a domain gap between the training data and the system's operating conditions.
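A standard way to add fog to clear-weather images at the data level is the atmospheric scattering model, I = J·t + A·(1 − t) with transmission t = exp(−β·d), where J is the clear image, d the depth, β the attenuation coefficient, and A the airlight. The sketch below works on a tiny grayscale image given as nested lists; the default `beta` and `airlight` values are hypothetical, and it covers fog only, not rain.

```python
import math
from typing import List


def add_fog(image: List[List[float]], depth: List[List[float]],
            beta: float = 0.02, airlight: float = 0.9) -> List[List[float]]:
    """Render synthetic fog with the atmospheric scattering model.

    image: clear grayscale intensities in [0, 1]; depth: distance in meters.
    Larger beta means denser fog.
    """
    foggy = []
    for img_row, depth_row in zip(image, depth):
        row = []
        for j, d in zip(img_row, depth_row):
            t = math.exp(-beta * d)  # transmission falls off with distance
            row.append(j * t + airlight * (1.0 - t))  # blend toward the airlight
        foggy.append(row)
    return foggy


clear = [[0.2, 0.2], [0.8, 0.8]]
depth = [[5.0, 100.0], [5.0, 100.0]]  # near vs. far pixels
print(add_fog(clear, depth))
```

Distant pixels converge toward the airlight value, reproducing the loss of contrast that makes far objects hard to detect in fog.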

Image-level adaptation

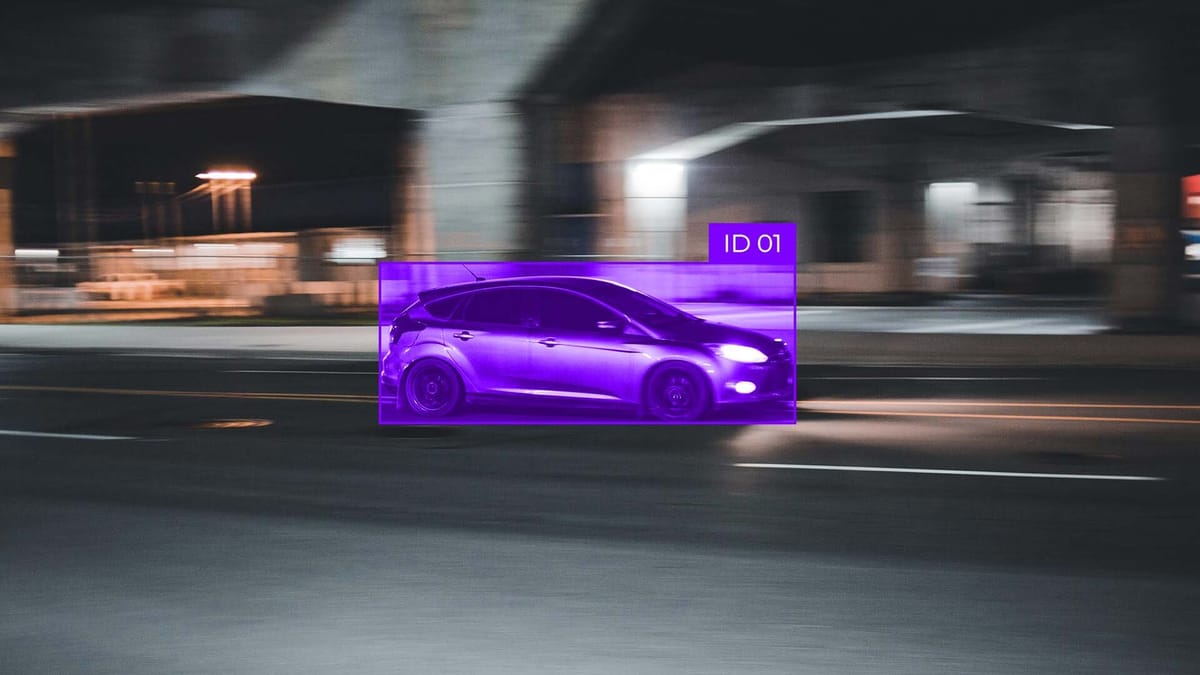

Image-level adaptation is a domain adaptation technique that reduces differences between data from different sources by matching common visual characteristics across images.

In this approach, the focus is on the global representation of the image formed in the deep layers of the neural network. During training, the model learns features that are useful in both the source and target domains. This is achieved by aligning the distributions of features extracted from images of different datasets. If the statistical properties of these features become similar, the model can be applied to new data with a smaller loss of accuracy.

Global feature alignment is implemented with dedicated training strategies, such as additional domain discriminator networks or alignment loss functions, which push the model to make the representations of source and target images as similar as possible. In other words, the system is trained so that it is difficult to tell which domain a particular feature representation comes from.
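As an example of the loss-function option, the sketch below computes maximum mean discrepancy (MMD) with a linear kernel, which reduces to the squared distance between the mean source and mean target feature vectors. The text does not name a specific loss, so MMD is a swapped-in choice, and the 2-D features are hypothetical. In training, this term would be added to the task loss and minimized with respect to the feature extractor; that part is not shown.

```python
from typing import List, Sequence


def mean_vector(features: Sequence[Sequence[float]]) -> List[float]:
    """Average a batch of feature vectors dimension by dimension."""
    n = len(features)
    return [sum(col) / n for col in zip(*features)]


def linear_mmd(source: Sequence[Sequence[float]], target: Sequence[Sequence[float]]) -> float:
    """Squared distance between mean source and mean target features.

    Zero when the first-order statistics of the two domains match.
    """
    mu_s, mu_t = mean_vector(source), mean_vector(target)
    return sum((a - b) ** 2 for a, b in zip(mu_s, mu_t))


# Hypothetical global features from clear (source) and foggy (target) images.
source_feats = [[1.0, 0.0], [3.0, 2.0]]
target_feats = [[0.0, 0.0], [2.0, 0.0]]
print(linear_mmd(source_feats, target_feats))  # 2.0
```

A linear kernel only matches means; richer kernels or a discriminator can also capture differences in variance and higher-order structure.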

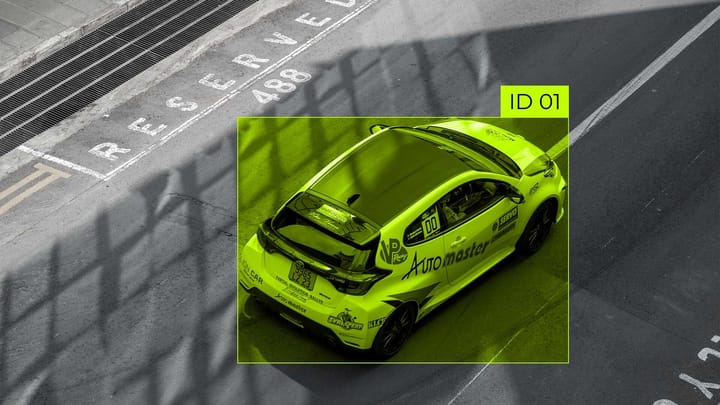

Object-level adaptation: instance-aware feature alignment

Object-level adaptation is a domain adaptation method that focuses on individual objects or instances present in a scene. In autonomous driving, the system needs to recognize pedestrians, cars, cyclists, and road signs under all conditions. In global feature adaptation, the model only matches the general statistical properties of the images across domains, which does not guarantee the correct detection and classification of individual objects in new conditions. Object-level adaptation helps the model learn to distinguish and correctly interpret each instance regardless of the domain in which it appears.

A key tool in this approach is the ROI-based (Region of Interest) domain classifier. It estimates which domain the features of a specific object region belong to, rather than classifying the entire image. This lets the system focus on individual instances and align features between the source and target domains at the object level. Using ROI classifiers, the model learns object representations that are invariant to changes in background, lighting, or weather conditions.

Another important element is the alignment of instance-level distributions. This forces the model to produce features for individual objects in different domains that have similar distributions in feature space. As a result, the model avoids detection and classification errors caused by domain shift and recognizes objects more reliably in the real world.
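The loss behind an ROI-based domain classifier can be sketched as binary cross-entropy applied to each region proposal, with every ROI inheriting the domain label of its image. The sketch assumes the classifier's per-ROI probabilities are already computed (the network itself is not shown). The classifier is trained to minimize this loss, while the feature extractor is trained, for example through gradient reversal, to make it large.

```python
import math
from typing import List


def roi_domain_loss(roi_probs: List[float], domain_label: int, eps: float = 1e-7) -> float:
    """Average binary cross-entropy of per-ROI domain predictions.

    roi_probs: classifier output for each region proposal, read as
    P(region comes from the target domain).
    domain_label: 0 for a source image, 1 for a target image.
    """
    total = 0.0
    for p in roi_probs:
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(domain_label * math.log(p) + (1 - domain_label) * math.log(1.0 - p))
    return total / len(roi_probs)


# Hypothetical outputs for three ROIs in one source image.
print(roi_domain_loss([0.1, 0.5, 0.9], domain_label=0))
```

The ROI with probability 0.9 dominates the loss: its features still look clearly "target-like" even though it comes from a source image, which is exactly the kind of instance the alignment works on.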

Gradient reversal and hard example mining

In autonomous driving, a model may perform well on typical objects and conditions but fail when it encounters atypical situations. Gradient reversal training helps the system generalize and become more robust to such hard examples.

The idea of the method is to reverse the gradient coming from a domain classifier before it reaches the feature extractor. This aligns features between the source and target domains, so that the model not only learns to classify objects correctly but also forms features that are invariant to domain differences.

During training with gradient reversal, the model simultaneously optimizes two goals: improving performance on the detection or classification task and reducing the discriminator's ability to determine the domain of an example. This allows the system to learn generalized representations that work well in new, unseen conditions.
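The two goals can be optimized in a single backward pass with a gradient reversal layer (GRL): it is the identity in the forward pass and multiplies the incoming gradient by −λ in the backward pass. The scalar sketch below shows this behavior with made-up gradient values; in PyTorch the same idea is typically written as a custom `torch.autograd.Function`.

```python
class GradientReversal:
    """Gradient reversal layer: identity forward, negated and scaled gradient backward."""

    def __init__(self, lam: float):
        self.lam = lam  # trade-off between the task loss and domain confusion

    def forward(self, features: float) -> float:
        return features  # features reach the domain classifier unchanged

    def backward(self, grad_output: float) -> float:
        return -self.lam * grad_output  # flips the domain classifier's gradient


def feature_extractor_grad(grad_task: float, grad_domain: float, grl: GradientReversal) -> float:
    """Gradient at a shared feature: task gradient plus the reversed domain gradient."""
    return grad_task + grl.backward(grad_domain)


# Scalar example with made-up gradient values.
grl = GradientReversal(lam=0.5)
print(feature_extractor_grad(grad_task=0.3, grad_domain=0.4, grl=grl))
```

The domain classifier still descends on its own loss, while the feature extractor moves against it, which is what makes features harder to attribute to a domain.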

Hard examples in the target domain receive special attention; for such cases, the AdvGRL (Adversarial Gradient Reversal Layer) method is used. AdvGRL strengthens the influence of hard examples on the learning process, forcing the model to actively incorporate them into feature formation. This helps improve the detection of atypical objects and increases the model's stability in real road conditions, where unexpected situations occur more often than in controlled datasets.

Together, these techniques enable autonomous driving systems to adapt to diverse domains, including challenging weather and non-standard traffic situations. Models become more stable, better at generalizing, and less dependent on the specifics of particular datasets. As a result, autonomous transport systems become safer and more reliable in real conditions, where complex and unpredictable scenarios arise.
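The exact AdvGRL rule is defined in its original paper; the sketch below only illustrates the general idea of scaling the reversed gradient up for hard examples. It assumes the domain classifier's loss serves as the hardness signal: a low loss means the classifier still separates the domains easily, so the example is treated as hard and gets a larger reversal coefficient, capped at an upper bound. The names `lam0`, `alpha`, and `beta` and their values are hypothetical.

```python
def adaptive_reversal_coeff(domain_loss: float, lam0: float = 1.0,
                            alpha: float = 0.6, beta: float = 10.0) -> float:
    """Hypothetical hard-example-aware reversal coefficient.

    domain_loss below alpha marks a hard example: the coefficient grows as
    lam0 / domain_loss but never exceeds beta. Easy examples keep lam0.
    """
    if domain_loss < alpha:
        return min(lam0 / max(domain_loss, 1e-8), beta)  # guard against division by zero
    return lam0


for loss in (1.2, 0.5, 0.05):
    print(loss, adaptive_reversal_coeff(loss))
```

The cap keeps a few extremely easy-to-classify examples from producing exploding gradients in the feature extractor.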

FAQ

Why does testing on a single dataset overestimate real-world performance?

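Testing a model on only one dataset does not account for the variety of scenarios, conditions, and domain differences encountered in the real world. The test split usually shares the cameras, regions, and annotation rules of the training split, so a high score can reflect fitting those specifics rather than true generalization.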

What metrics best reflect transfer robustness beyond mAP?

In addition to mAP, transfer robustness is reflected in robustness scores, cross-dataset accuracy, F1-score on the target domain, and domain gap metrics that measure how well the model generalizes to new conditions.

What components help mitigate the performance degradation caused by weather conditions such as fog and rain?

Domain adaptation, global and local feature alignment, synthetic data, and gradient reversal training techniques help mitigate performance degradation in fog and rain.

How is image-level alignment different from object-level alignment?

Image-level alignment aligns global characteristics of the scene, while object-level alignment focuses on local features of individual instances.

How effective are synthetic or auxiliary data in improving the transferability of models to adverse conditions?

Synthetic and auxiliary data can improve the transferability of models to adverse conditions by providing additional examples of scenarios that are missing in real-world datasets.
