Anchor-based object detection: Parameterizing 3D bounding boxes for autonomous vehicle perception

Anchor-based object detection is a key approach in modern computer vision systems. It is used in complex perceptual environments, particularly in audiovisual systems and autonomous vehicles. In such models, anchor boxes serve as reference frames for predicting object positions in space.

For three-dimensional scenes, 3D box parameterization plays an important role, allowing the compact description of the object's location, dimensions, and orientation. Additionally, orientation encoding mechanisms are used to represent the object's rotation angle, and dimension labeling is used to accurately determine its geometric characteristics. Together, these components provide accurate localization and interpretation of objects in complex multimodal environments.

Key Takeaways

- Predefined anchor templates provide efficient reference points for predicting bounding boxes on feature maps.

- Offset and logarithmic terms transform templates into precise image space coordinates.

- K-means clustering of ground-truth box labels helps choose template sizes and aspect ratios that match real objects.

- Normalizing regression targets by their standard deviations stabilizes training and speeds up convergence.

- 3D extensions add depth and orientation to templates.

Bounding box parameterization for anchor-based object detection

In anchor-based object detection models, bounding boxes are parameterized so that the model can predict the position and size of an object relative to predefined reference boxes, or anchors. This approach turns object localization into a regression problem in which a neural network predicts the displacement between the anchor box and the object's bounding box.

Centroid parameterization and representation in terms of corners or min-max coordinates

Centroid and corner formats are the two common ways to describe a bounding box's coordinates. In centroid format, a box is defined by its center (x, y) and its dimensions (w, h), where w and h are the width and height.

The other common format is the corner, or min-max, representation, where the rectangle is defined by the coordinates of its upper-left and lower-right corners (xmin, ymin, xmax, ymax).

Both formats are easy to convert between and to use at different data processing stages.
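The conversion between the two formats can be sketched in a few lines of Python. The function names below are illustrative and not taken from any particular library; the sketch assumes image coordinates in which y grows downward, so (xmin, ymin) is the upper-left corner.

```python
def centroid_to_corners(box):
    """Convert (cx, cy, w, h) to (xmin, ymin, xmax, ymax)."""
    cx, cy, w, h = box
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)


def corners_to_centroid(box):
    """Convert (xmin, ymin, xmax, ymax) to (cx, cy, w, h)."""
    xmin, ymin, xmax, ymax = box
    w, h = xmax - xmin, ymax - ymin
    return (xmin + w / 2, ymin + h / 2, w, h)


corners = centroid_to_corners((50.0, 40.0, 20.0, 10.0))
print(corners)                       # (40.0, 35.0, 60.0, 45.0)
print(corners_to_centroid(corners))  # (50.0, 40.0, 20.0, 10.0)
```

Corner format is usually the more convenient one for computing overlaps, while centroid format matches the way offsets are regressed.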

Offset regression targets

Offset regression targets play a key role in anchor-based models. The network predicts the offset between the anchor box and the ground-truth bounding box. Typically, the targets are the differences between the box centers, normalized by the anchor's width and height, and the logarithms of the ratios of the object's width and height to those of the anchor. This form of regression stabilizes training and helps the network handle objects of different scales.
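Written out, one widely used form of these targets looks as follows. The symbols are assumptions introduced here for illustration: (x, y, w, h) is the ground-truth box in centroid format and (x_a, y_a, w_a, h_a) is the anchor.

$$
t_x = \frac{x - x_a}{w_a}, \qquad
t_y = \frac{y - y_a}{h_a}, \qquad
t_w = \log\frac{w}{w_a}, \qquad
t_h = \log\frac{h}{h_a}
$$

Dividing the center differences by the anchor size makes the targets independent of scale, and the logarithm keeps the size targets symmetric around zero when the object is larger or smaller than the anchor.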

Bounding box encoding and decoding, and variance

To let the model work with these parameters, detectors use bounding box encoding and decoding procedures. During training, the coordinates of the ground-truth box are converted into displacements relative to the anchor. This process is called encoding. At inference, the network outputs displacements in the same parameterization, and these are decoded back into bounding box coordinates in the image. Thus, the system moves from relative values to the object's actual location.

An additional element of this parameterization is variance. It is used as a scaling factor during coordinate encoding and decoding: typically, encoded offsets are divided by the variance, and predicted offsets are multiplied by it before decoding. Variance controls the magnitude of the displacements that the model predicts and helps stabilize training. In effect, it keeps the regression targets in a comparable numeric range, reducing the risk of unstable gradients and improving optimization convergence.
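A minimal sketch of encoding and decoding with variance is shown below. The function names and the variance values (0.1 for centers, 0.2 for sizes) are assumptions chosen for illustration; the article does not prescribe specific values, and real detectors differ in their conventions. Boxes are in centroid format (cx, cy, w, h).

```python
import math

# Assumed example values; not specified by the article.
VARIANCES = (0.1, 0.1, 0.2, 0.2)


def encode(gt, anchor, variances=VARIANCES):
    """Turn a ground-truth box into regression targets relative to an anchor."""
    gx, gy, gw, gh = gt
    ax, ay, aw, ah = anchor
    vx, vy, vw, vh = variances
    return (
        (gx - ax) / aw / vx,      # normalized center offset, scaled by variance
        (gy - ay) / ah / vy,
        math.log(gw / aw) / vw,   # log size ratio, scaled by variance
        math.log(gh / ah) / vh,
    )


def decode(deltas, anchor, variances=VARIANCES):
    """Invert encode(): turn predicted offsets back into a box."""
    tx, ty, tw, th = deltas
    ax, ay, aw, ah = anchor
    vx, vy, vw, vh = variances
    return (
        ax + tx * vx * aw,
        ay + ty * vy * ah,
        aw * math.exp(tw * vw),
        ah * math.exp(th * vh),
    )


anchor = (100.0, 100.0, 50.0, 50.0)
gt = (110.0, 95.0, 60.0, 40.0)
targets = encode(gt, anchor)
restored = decode(targets, anchor)  # matches gt up to floating-point error
```

Because decoding is the exact inverse of encoding, the same variance values must be used in both directions.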

From images to feature maps

Convolutional backbones convert images into multi-level feature maps. Each map has a stride that sets its spatial granularity. Maps with a smaller stride localize small objects, and maps with a larger stride cover large objects.

Why place anchors on the feature maps? Doing so aligns the locations of the proposals with the receptive fields. It also reduces per-pixel work, memory use, and latency compared with dense sliding windows over the image.
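As a rough illustration, anchors can be tiled over a feature map by placing a small set of boxes at the center of every cell. In this sketch, `stride` is the step in image pixels between neighboring feature-map cells, `scales` are anchor side lengths in pixels, and `ratios` are width-to-height ratios; all names and the half-cell center offset are assumptions, since conventions vary between detectors.

```python
import math


def generate_anchors(feat_h, feat_w, stride, scales, ratios):
    """Return anchors as (cx, cy, w, h) in image pixels for one feature map."""
    anchors = []
    for row in range(feat_h):
        for col in range(feat_w):
            # Center of this feature-map cell in image coordinates.
            cx = (col + 0.5) * stride
            cy = (row + 0.5) * stride
            for scale in scales:
                for ratio in ratios:
                    # Keep the area near scale**2 while changing the shape.
                    w = scale * math.sqrt(ratio)
                    h = scale / math.sqrt(ratio)
                    anchors.append((cx, cy, w, h))
    return anchors


anchors = generate_anchors(feat_h=2, feat_w=3, stride=16,
                           scales=[32], ratios=[0.5, 1.0, 2.0])
print(len(anchors))  # 18: 2 * 3 cells, 3 anchors per cell
```

A 640 x 640 image with stride 16 gives a 40 x 40 map, so three anchors per cell already yield 4,800 candidates from that level alone, far fewer than one window per pixel.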

Decoding predictions at different levels, followed by NMS, reduces duplicates and improves accuracy.

Region proposal network pipeline

In modern object detection systems, the proposal and prediction flow plays a key role, determining how the model identifies potential object regions and converts them into final detections. Such a pipeline consists of several stages: generating candidate regions, predicting classes and bounding box coordinates, and filtering duplicate results. Depending on the detector architecture, these stages are implemented differently. Still, the most common mechanisms are the Region Proposal Network (RPN) in two-stage models, YOLO-style grid predictions in single-stage detectors, and Non-Maximum Suppression (NMS) for final result selection.

In two-stage detectors, such as Faster R-CNN, the first component of the pipeline is the RPN, which generates region proposals: image regions that are likely to contain objects. The rest of the network then classifies these regions and refines the bounding box coordinates. In single-stage models, proposal generation and classification are performed simultaneously by predicting bounding boxes from a regular feature grid. Regardless of the architecture, the final stage is usually NMS, which eliminates duplicate bounding boxes and keeps only the highest-scoring detections.
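The NMS step can be sketched as a greedy loop. This is a plain-Python illustration of the basic algorithm, not the implementation of any specific framework; the function names and the 0.5 threshold are assumptions. Boxes are in corner format (xmin, ymin, xmax, ymax).

```python
def iou(a, b):
    """Intersection over union of two corner-format boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Return indices of kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap a better box too much.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # [0, 2]
```

In the example, the second box overlaps the first with an IoU of about 0.68, so it is suppressed, while the distant third box survives. In practice NMS is usually applied per class.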

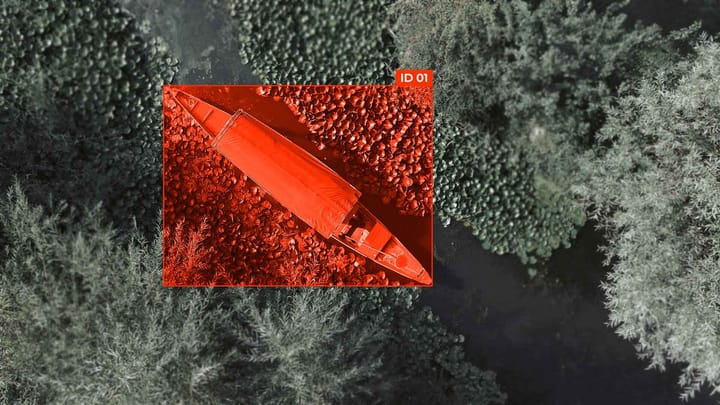

Parameterizing 3D bounding boxes for autonomous vehicles

Parameterizing 3D bounding boxes in autonomous vehicles enables the precise determination of the position, size, and orientation of objects. 3D bounding boxes describe objects in world or sensor coordinates, which is necessary for tasks such as trajectory planning, obstacle avoidance, and prediction of the movement of other road users.

Typically, a 3D bounding box is described by a set of parameters that include the object's center coordinates (x, y, z), its dimensions (l, w, h), and its orientation in space. The center coordinates define the object's position relative to the sensor or vehicle coordinate system. The length, width, and height parameters specify the geometric dimensions of the object, allowing the model to account for different types of objects, such as cars, pedestrians, or cyclists. Orientation is described by the angle of rotation around the vertical axis (yaw), which indicates the object's heading, that is, its direction of movement or its orientation relative to the road.
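To make the seven parameters concrete, the sketch below expands (x, y, z, l, w, h, yaw) into the eight corner points of the box. It assumes a coordinate system with z pointing up, the length measured along the box's local x axis, and (x, y, z) at the geometric center of the box; datasets differ on all three conventions, so treat these as illustrative choices.

```python
import math


def box3d_corners(x, y, z, l, w, h, yaw):
    """Return the 8 corners of a yaw-rotated 3D box centered at (x, y, z)."""
    c, s = math.cos(yaw), math.sin(yaw)
    corners = []
    for dx in (l / 2, -l / 2):
        for dy in (w / 2, -w / 2):
            for dz in (h / 2, -h / 2):
                # Rotate the local offset around the vertical axis, then translate.
                corners.append((x + c * dx - s * dy,
                                y + s * dx + c * dy,
                                z + dz))
    return corners


corners = box3d_corners(0.0, 0.0, 0.0, 4.0, 2.0, 1.5, 0.0)
print(corners[0])  # (2.0, 1.0, 0.75)
```

Only yaw is modeled because road objects are assumed to stay upright; roll and pitch would require a full rotation matrix.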

During training, neural networks do not always predict these parameters directly. Often, they regress displacements relative to reference 3D anchor boxes or to previous estimates of the object's position. The model predicts a center displacement, a size scaling, and an orientation correction that adapt the anchor to the real object in the scene. To stabilize training, center offsets can be normalized by the anchor dimensions and sizes can be regressed in logarithmic space.
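One possible way to express these residuals is sketched below. The normalization by the anchor's ground-plane diagonal follows a convention seen in some lidar detectors, but it is an assumption here, as are the function names and the use of a wrapped angle difference for orientation; the article does not commit to a specific scheme.

```python
import math


def wrap_angle(a):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi


def encode_3d(gt, anchor):
    """Residuals of a ground-truth box (x, y, z, l, w, h, yaw) w.r.t. an anchor."""
    x, y, z, l, w, h, yaw = gt
    xa, ya, za, la, wa, ha, yaw_a = anchor
    diag = math.hypot(la, wa)  # anchor diagonal in the ground plane
    return (
        (x - xa) / diag, (y - ya) / diag, (z - za) / ha,
        math.log(l / la), math.log(w / wa), math.log(h / ha),
        wrap_angle(yaw - yaw_a),
    )


def decode_3d(deltas, anchor):
    """Invert encode_3d(): apply predicted residuals to an anchor."""
    dx, dy, dz, dl, dw, dh, dyaw = deltas
    xa, ya, za, la, wa, ha, yaw_a = anchor
    diag = math.hypot(la, wa)
    return (
        xa + dx * diag, ya + dy * diag, za + dz * ha,
        la * math.exp(dl), wa * math.exp(dw), ha * math.exp(dh),
        wrap_angle(yaw_a + dyaw),
    )


anchor = (10.0, 2.0, -1.0, 3.9, 1.6, 1.56, 0.0)
gt = (10.5, 2.0, -1.0, 4.2, 1.8, 1.6, 0.3)
restored = decode_3d(encode_3d(gt, anchor), anchor)  # matches gt up to rounding
```

Wrapping the angle difference avoids large targets when the two headings sit on opposite sides of the plus or minus pi boundary.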

Feature of 3D parameterization

A distinctive feature of 3D parameterization is the need to account for the data source. In autonomous driving systems, 3D bounding boxes are predicted from lidar data, stereo cameras, or multi-sensor combinations. With lidar, object coordinates are measured directly in 3D from a point cloud. With cameras, the model must also estimate scene depth, which complicates the task and requires geometric constraints or depth-estimation methods.

Thus, parameterizing 3D bounding boxes provides a compact, standardized way to describe objects in a 3D environment.

Reducing or eliminating anchors: anchor-free and hybrid methods

In modern object detection models, there is a trend to reduce or completely abandon the use of anchors. Traditional anchor-based detectors use a large number of predefined bounding boxes of different scales and aspect ratios, which complicates model tuning and increases computational cost.

To simplify the architecture and reduce the number of hyperparameters, anchor-free and hybrid approaches have been developed. Anchor-free methods abandon predefined anchor boxes entirely and predict object positions directly from the feature map. Hybrid methods combine elements of anchor-based and anchor-free approaches, leveraging mechanisms from both to improve training and detection accuracy.
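As an illustration of direct prediction from the feature map, the sketch below decodes a box from four distances predicted at a single cell, in the style of distance-based anchor-free detectors such as FCOS. The function name, the half-cell center offset, and the assumption that distances are already expressed in image pixels are illustrative choices, not details from the article.

```python
def decode_anchor_free(col, row, stride, distances):
    """Decode (left, top, right, bottom) distances predicted at one cell.

    Returns the box in corner format (xmin, ymin, xmax, ymax).
    """
    # Location of the cell center in image coordinates.
    cx = (col + 0.5) * stride
    cy = (row + 0.5) * stride
    left, top, right, bottom = distances
    return (cx - left, cy - top, cx + right, cy + bottom)


box = decode_anchor_free(col=2, row=1, stride=8, distances=(10.0, 4.0, 6.0, 8.0))
print(box)  # (10.0, 8.0, 26.0, 20.0)
```

No anchor scales or aspect ratios appear anywhere in this decoding, which is the source of the reduced hyperparameter count.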

FAQ

What is the difference between anchors and predicted boxes in image detectors?

Anchors are predefined candidates for objects, while predicted boxes are object coordinates that the network obtains by regression from anchors or from a feature map.

How are the offsets relative to the template box encoded and decoded at output?

The offsets of the template box are encoded as normalized center-to-center distances and logarithmic size ratios. At output, these values are decoded back into the coordinates of the actual bounding box.

How are anchor sizes and aspect ratios chosen for a dataset?

Anchor sizes and aspect ratios are chosen based on the statistics of object sizes and proportions in the training dataset to cover a variety of shapes and scales.
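One way to derive anchor shapes from dataset statistics is K-means clustering of the ground-truth (width, height) pairs, using IoU as the similarity measure, as popularized by YOLO-family detectors. The sketch below is a simplified, deterministic version; the function names, the initialization scheme, and the use of the cluster mean as the new center are assumptions made for illustration.

```python
def wh_iou(a, b):
    """IoU of two boxes given only (w, h), assuming they share a center."""
    inter = min(a[0], b[0]) * min(a[1], b[1])
    return inter / (a[0] * a[1] + b[0] * b[1] - inter)


def kmeans_anchors(sizes, k, iters=50):
    """Cluster (w, h) pairs into k anchor shapes."""
    # Deterministic start: k boxes spread evenly over the area-sorted list.
    ordered = sorted(sizes, key=lambda s: s[0] * s[1])
    centers = [ordered[(2 * i + 1) * len(ordered) // (2 * k)] for i in range(k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for s in sizes:
            # Assign each box to the center it overlaps most.
            best = max(range(k), key=lambda j: wh_iou(s, centers[j]))
            clusters[best].append(s)
        new_centers = [
            (sum(w for w, _ in c) / len(c), sum(h for _, h in c) / len(c))
            if c else centers[j]
            for j, c in enumerate(clusters)
        ]
        if new_centers == centers:
            break
        centers = new_centers
    return centers


sizes = [(10, 20), (12, 22), (11, 21), (100, 50), (110, 55), (90, 45)]
print(kmeans_anchors(sizes, k=2))  # one tall small anchor, one wide large anchor
```

Using IoU rather than Euclidean distance keeps large boxes from dominating the clustering, since the measure is independent of absolute size.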

Where are anchor templates used in modern detectors - on raw images or feature maps?

In modern detectors, anchors are applied to feature maps rather than to raw images.

How do RPN-style and YOLO-style flows differ when using templates?

RPN-style flows generate candidate regions relative to anchor templates for further classification. In contrast, YOLO-style flows predict bounding boxes and classes for each grid cell without a separate proposal stage.

How are 3D boxes parameterized for autonomous vehicle perception?

3D boxes for autonomous vehicle perception are parameterized by the object's center coordinates, dimensions (length, width, height), and orientation in 3D space.
